Tutorial 1: Un/Self-supervised learning methods#

Week 3, Day 3: Unsupervised and self-supervised learning

By Neuromatch Academy

Content creators: Arna Ghosh, Colleen Gillon, Tim Lillicrap, Blake Richards

Content reviewers: Atnafu Lambebo, Hadi Vafaei, Khalid Almubarak, Melvin Selim Atay, Kelson Shilling-Scrivo

Content editors: Anoop Kulkarni, Spiros Chavlis

Production editors: Deepak Raya, Gagana B, Spiros Chavlis

Tutorial Objectives#

In this tutorial, you will learn about the importance of learning good representations of data.

Specific objectives for this tutorial:

Train logistic regressions (A) directly on input data and (B) on representations learned from the data.

Compare the classification performances achieved by the different networks.

Compare the representations learned by the different networks.

Identify the advantages of self-supervised learning over supervised or traditional unsupervised methods.

Setup#

Install dependencies#

# @title Install dependencies

# @markdown Download dataset, modules, and files needed for the tutorial from GitHub.

# @markdown This cell will download the library from OSF, but you can check out the code in https://github.com/colleenjg/neuromatch_ssl_tutorial.git

import os, sys, shutil, importlib

REPO_PATH = "neuromatch_ssl_tutorial"
download_str = "Downloading"
if os.path.exists(REPO_PATH):
    download_str = "Redownloading"
    shutil.rmtree(REPO_PATH)

# Download from github repo directly
# !git clone git://github.com/colleenjg/neuromatch_ssl_tutorial.git --quiet

from io import BytesIO
from urllib.request import urlopen
from zipfile import ZipFile

zipurl = 'https://osf.io/smqvg/download'
print(f"{download_str} and unzipping the file... Please wait.")
with urlopen(zipurl) as zipresp:
    with ZipFile(BytesIO(zipresp.read())) as zfile:
        zfile.extractall()

# Correct now-broken use of deprecated np.product method
for module in ["data.py", "load.py", "models.py"]:
    with open(f"neuromatch_ssl_tutorial/modules/{module}", "r") as f:
        source = f.read()
    source = source.replace("np.product(", "np.prod(")
    with open(f"neuromatch_ssl_tutorial/modules/{module}", "w") as f:
        f.write(source)

print("Download completed!")

Install and import feedback gadget#

# @title Install and import feedback gadget

!pip3 install vibecheck datatops --quiet

from vibecheck import DatatopsContentReviewContainer

def content_review(notebook_section: str):
    return DatatopsContentReviewContainer(
        "",  # No text prompt
        notebook_section,
        {
            "url": "https://pmyvdlilci.execute-api.us-east-1.amazonaws.com/klab",
            "name": "neuromatch_dl",
            "user_key": "f379rz8y",
        },
    ).render()

feedback_prefix = "W3D3_T1"

# Imports

import torch

import torchvision

import numpy as np

import matplotlib.pyplot as plt

# Import modules designed for use in this notebook.

from neuromatch_ssl_tutorial.modules import data, load, models, plot_util

importlib.reload(data)

importlib.reload(load)

importlib.reload(models)

importlib.reload(plot_util)

Figure settings#

# @title Figure settings

import logging

logging.getLogger('matplotlib.font_manager').disabled = True

import ipywidgets as widgets # Interactive display

%matplotlib inline

%config InlineBackend.figure_format = 'retina'

plt.style.use("https://raw.githubusercontent.com/NeuromatchAcademy/content-creation/main/nma.mplstyle")

plt.rc('axes', unicode_minus=False) # To ensure negatives render correctly with xkcd style

import warnings

warnings.filterwarnings("ignore")

Plotting functions#

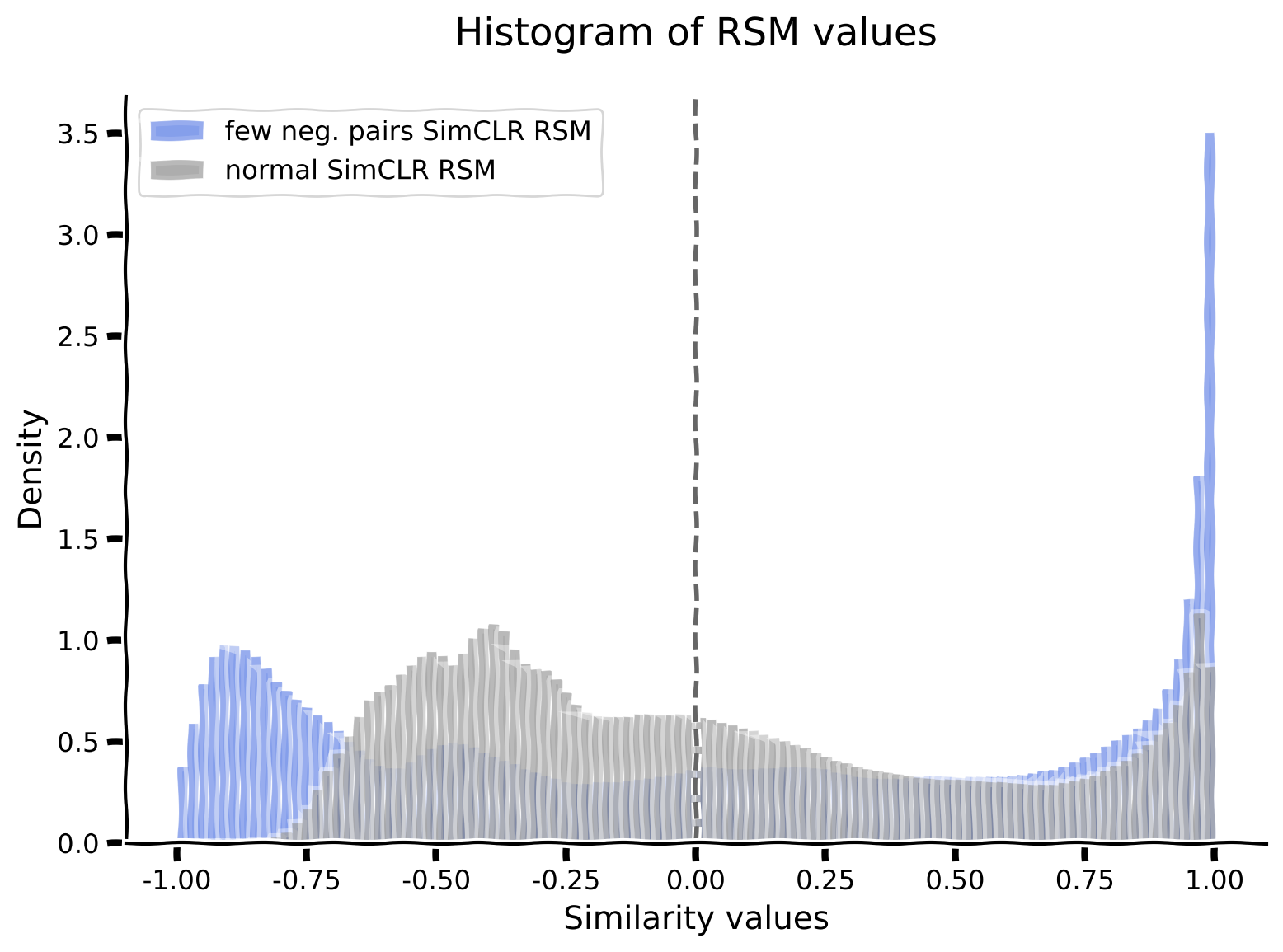

Function to plot a histogram of RSM values: plot_rsm_histogram(rsms, colors)

# @title Plotting functions

# @markdown Function to plot a histogram of RSM values: `plot_rsm_histogram(rsms, colors)`

def plot_rsm_histogram(rsms, colors, labels=None, nbins=100):
    """
    Function to plot histogram based on Representational Similarity Matrices

    Args:
        rsms: List
            List of values within RSM
        colors: List
            List of colors for histogram
        labels: List
            List of RSM Labels
        nbins: Integer
            Specifies number of histogram bins

    Returns:
        Nothing
    """
    fig, ax = plt.subplots(1)
    ax.set_title("Histogram of RSM values", y=1.05)

    min_val = np.min([np.nanmin(rsm) for rsm in rsms])
    max_val = np.max([np.nanmax(rsm) for rsm in rsms])
    bins = np.linspace(min_val, max_val, nbins+1)

    if labels is None:
        labels = [labels] * len(rsms)
    elif len(labels) != len(rsms):
        raise ValueError("If providing labels, must provide as many as RSMs.")

    if len(rsms) != len(colors):
        raise ValueError("Must provide as many colors as RSMs.")

    for r, rsm in enumerate(rsms):
        ax.hist(
            rsm.reshape(-1), bins, density=True, alpha=0.4,
            color=colors[r], label=labels[r]
        )
    ax.axvline(x=0, ls="dashed", alpha=0.6, color="k")
    ax.set_ylabel("Density")
    ax.set_xlabel("Similarity values")
    ax.legend()
    plt.show()

Helper functions#

# @title Helper functions

from IPython.display import display, Image # to visualize images

# @markdown Function to test a custom torch RSM function: `test_custom_torch_RSM_fct()`

def test_custom_torch_RSM_fct(custom_torch_RSM_fct):
    """
    Function to test the implementation of custom_torch_RSM_fct

    Args:
        custom_torch_RSM_fct: f_name
            Function to test

    Returns:
        Nothing
    """
    rand_feats = torch.rand(100, 1000)
    RSM_custom = custom_torch_RSM_fct(rand_feats)
    RSM_ground_truth = data.calculate_torch_RSM(rand_feats)

    if torch.allclose(RSM_custom, RSM_ground_truth, equal_nan=True):
        print("custom_torch_RSM_fct() is correctly implemented.")
    else:
        print("custom_torch_RSM_fct() is NOT correctly implemented.")


# @markdown Function to test a custom contrastive loss function: `test_custom_contrastive_loss_fct()`

def test_custom_contrastive_loss_fct(custom_simclr_contrastive_loss):
    """
    Function to test the implementation of custom_simclr_contrastive_loss

    Args:
        custom_simclr_contrastive_loss: f_name
            Function to test

    Returns:
        Nothing
    """
    rand_proj_feat1 = torch.rand(100, 1000)
    rand_proj_feat2 = torch.rand(100, 1000)
    loss_custom = custom_simclr_contrastive_loss(rand_proj_feat1, rand_proj_feat2)
    loss_ground_truth = models.contrastive_loss(rand_proj_feat1, rand_proj_feat2)

    if torch.allclose(loss_custom, loss_ground_truth):
        print("custom_simclr_contrastive_loss() is correctly implemented.")
    else:
        print("custom_simclr_contrastive_loss() is NOT correctly implemented.")

Set random seed#

Executing set_seed(seed=seed) sets the random seed.

# @title Set random seed

# @markdown Executing `set_seed(seed=seed)` sets the random seed.

# For DL, it's critical to set the random seed so that students can have a
# baseline to compare their results to expected results.
# Read more here: https://pytorch.org/docs/stable/notes/randomness.html

# Call the `set_seed` function in the exercises to ensure reproducibility.

import random
import torch

def set_seed(seed=None, seed_torch=True):
    """
    Handles variability by controlling sources of randomness
    through set seed values

    Args:
        seed: Integer
            Set the seed value to given integer.
            If no seed, set seed value to random integer in the range 2^32
        seed_torch: Bool
            Seeds the random number generator for all devices to
            offer some guarantees on reproducibility

    Returns:
        Nothing
    """
    if seed is None:
        seed = np.random.choice(2 ** 32)
    random.seed(seed)
    np.random.seed(seed)
    if seed_torch:
        torch.manual_seed(seed)
        torch.cuda.manual_seed_all(seed)
        torch.cuda.manual_seed(seed)
        torch.backends.cudnn.benchmark = False
        torch.backends.cudnn.deterministic = True

    print(f'Random seed {seed} has been set.')


# In case that `DataLoader` is used
def seed_worker(worker_id):
    """
    DataLoader will reseed workers following randomness in
    multi-process data loading algorithm.

    Args:
        worker_id: integer
            ID of subprocess to seed. 0 means that
            the data will be loaded in the main process
            Refer: https://pytorch.org/docs/stable/data.html#data-loading-randomness for more details

    Returns:
        Nothing
    """
    worker_seed = torch.initial_seed() % 2**32
    np.random.seed(worker_seed)
    random.seed(worker_seed)
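To see what seeding buys us, here is a minimal sketch that uses only Python's `random` module and NumPy (no torch). The helper `draw_numbers()` is hypothetical and not part of the tutorial code. Re-seeding with the same value reproduces the same draws, and the modulo operation mirrors how `seed_worker()` folds a large seed into the 32-bit range that `np.random.seed()` accepts.

```python
import random
import numpy as np

def draw_numbers(seed):
    """Hypothetical helper: seed both generators, then draw a few values."""
    random.seed(seed)
    np.random.seed(seed)
    return random.random(), np.random.rand(3)

py_a, np_a = draw_numbers(2021)
py_b, np_b = draw_numbers(2021)
py_c, np_c = draw_numbers(2022)

same_seed_matches = (py_a == py_b) and np.array_equal(np_a, np_b)
different_seed_differs = (py_a != py_c) or not np.array_equal(np_a, np_c)

# np.random.seed() only accepts values in [0, 2**32 - 1], so a larger seed
# is reduced modulo 2**32, as seed_worker() does with torch.initial_seed().
large_seed = 2**40 + 7
worker_seed = large_seed % 2**32

print(same_seed_matches, different_seed_differs, worker_seed)
```

Seeding does not make the numbers less random; it only makes the sequence repeatable, which is what allows results to be compared against the expected values quoted in this tutorial.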

Set device (GPU or CPU). Execute set_device()#

# @title Set device (GPU or CPU). Execute `set_device()`

# especially if torch modules used.

# Inform the user if the notebook uses GPU or CPU.

def set_device():
    """
    Set the device. CUDA if available, CPU otherwise

    Args:
        None

    Returns:
        device: String
            "cuda" if a GPU is available, "cpu" otherwise
    """
    device = "cuda" if torch.cuda.is_available() else "cpu"
    if device != "cuda":
        print("WARNING: For this notebook to perform best, "
              "if possible, in the menu under `Runtime` -> "
              "`Change runtime type.` select `GPU` ")
    else:
        print("GPU is enabled in this notebook.")

    return device

# Set global variables

SEED = 2021

set_seed(seed=SEED)

DEVICE = set_device()

Random seed 2021 has been set.

WARNING: For this notebook to perform best, if possible, in the menu under `Runtime` -> `Change runtime type.` select `GPU`

Pre-load variables (allows each section to be run independently)#

# @markdown ### Pre-load variables (allows each section to be run independently)

# Section 1

dSprites = data.dSpritesDataset(

os.path.join(REPO_PATH, "dsprites", "dsprites_subset.npz")

)

dSprites_torchdataset = data.dSpritesTorchDataset(

dSprites,

target_latent="shape"

)

train_sampler, test_sampler = data.train_test_split_idx(

dSprites_torchdataset,

fraction_train=0.8,

randst=SEED

)

supervised_encoder = load.load_encoder(REPO_PATH,

model_type="supervised",

verbose=False)

# Section 2

custom_torch_RSM_fct = None # Default is used instead

# Section 3

random_encoder = load.load_encoder(REPO_PATH,

model_type="random",

verbose=False)

# Section 4

vae_encoder = load.load_encoder(REPO_PATH,

model_type="vae",

verbose=False)

# Section 5

invariance_transforms = torchvision.transforms.RandomAffine(

degrees=90,

translate=(0.2, 0.2),

scale=(0.8, 1.2)

)

dSprites_invariance_torchdataset = data.dSpritesTorchDataset(

dSprites,

target_latent="shape",

simclr=True,

simclr_transforms=invariance_transforms

)

# Section 6

simclr_encoder = load.load_encoder(REPO_PATH,

model_type="simclr",

verbose=False)

Section 0: Introduction#

Video 0: Introduction#

Submit your feedback#

# @title Submit your feedback

content_review(f"{feedback_prefix}_Introduction_Video")

Section 1: Representations are important#

Time estimate: ~30mins

Video 1: Why do representations matter?#

Submit your feedback#

# @title Submit your feedback

content_review(f"{feedback_prefix}_Why_do_representations_matter_Video")

Section 1.1: Introducing the dSprites dataset#

In this tutorial, we will be using a subset of the openly available dSprites dataset to investigate the importance of learning good representations.

Note on dataset: For convenience, we will be using a subset of the original, full dataset, which is available on GitHub.

Interactive Demo 1.1.1: Exploring the dSprites dataset#

In this first demo, we will get to know the dSprites dataset. This dataset is made up of black and white images (20,000 images total in the subset we are using).

The images in the dataset can be described using different combinations of latent dimension values, sampled from:

Shapes (3): square (1.0), oval (2.0) or heart (3.0)

Scales (6): 0.5 to 1.0

Orientations (40): 0 to 2\(\pi\)

Positions in X (32): 0 to 1 (left to right)

Positions in Y (32): 0 to 1 (top to bottom)

As a result, each image carries 5 labels, one for each of the latent dimensions.
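As a rough illustration of how these latent dimensions combine, the short sketch below counts the possible combinations of latent values. The dictionary name `latent_sizes` is hypothetical; the counts are the ones listed above. The final estimate assumes that every image in the 20,000-image subset has a distinct combination of latent values, which is an assumption rather than something stated in this tutorial.

```python
import math

# Number of values per latent dimension, as listed above.
latent_sizes = {
    "shape": 3,
    "scale": 6,
    "orientation": 40,
    "posX": 32,
    "posY": 32,
}

# Every combination of latent values describes one possible image.
num_combinations = math.prod(latent_sizes.values())
num_labels_per_image = len(latent_sizes)

subset_size = 20_000  # size of the subset used in this tutorial
fraction_in_subset = subset_size / num_combinations

print(f"{num_combinations} combinations, {num_labels_per_image} labels per image")
print(f"The subset covers at most {100 * fraction_in_subset:.1f}% of the combinations")
```

The subset therefore samples only a small part of the space of possible images, which is worth keeping in mind when interpreting test set performance.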

We will first load the dataset into the dSprites object, which is an instance of the data.dSpritesDataset class.

dSprites = data.dSpritesDataset(

os.path.join(REPO_PATH, "dsprites", "dsprites_subset.npz")

)

Next, we use the dSpritesDataset class method show_images() to plot a few images from the dataset, with their latent dimension values printed below.

Interactive Demo: View a different set of randomly sampled images by passing the random state argument randst any integer or the value None. (The original setting is randst=SEED.)

# DEMO: To view different images, set randst to any integer value.

dSprites.show_images(num_images=10, randst=SEED)

To better understand the posX and posY latent dimensions (which will be most relevant in Bonus 2), we plot the images with some annotations. The annotations (in red) do not modify the actual images; they are added purely for visualization purposes, and show:

the edges of the posX and posY spans, and

the center, i.e., (posX, posY), for each shape.

Note on shape positions: Notice that all shape centers are positioned within the area marked by the red square. posX and posY actually describe the relative position of the center of a shape within this area: posX=0 (left) to posX=1 (right), and posY=0 (top) to posY=1 (bottom). No shape center appears outside, in the buffer area. This choice in the dSprites dataset design ensures that shapes of different scales and rotations all appear fully.

# DEMO: To view different images, set randst to any integer value.

dSprites.show_images(num_images=10, randst=SEED, annotations="pos")

Section 1.2: Training a classifier with and without representations#

Now, we will investigate how 2 different types of classifiers perform when trained to decode the shape latent dimension of images in the dSprites dataset.

Specifically, we will train one classifier directly on the images, and another on the output of an encoder network.
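Before turning to dSprites, the idea can be previewed on a toy problem. The sketch below is not part of the tutorial code and does not use the dSprites data: it builds two concentric rings of 2D points, then fits a logistic regression (A) on the raw coordinates and (B) on a hand-made "representation" that adds the squared radius. The function `fit_and_score()` and all variable names are hypothetical, and accuracy is measured on the training points for brevity.

```python
import numpy as np

rng = np.random.default_rng(0)

# Toy data: class 0 lies on a small ring, class 1 on a larger ring around it.
n_per_class = 200
angles = rng.uniform(0, 2 * np.pi, size=2 * n_per_class)
radii = np.concatenate([np.full(n_per_class, 1.0), np.full(n_per_class, 3.0)])
radii = radii + rng.normal(0, 0.1, size=radii.shape)
X_raw = np.stack([radii * np.cos(angles), radii * np.sin(angles)], axis=1)
y = np.concatenate([np.zeros(n_per_class), np.ones(n_per_class)])

def fit_and_score(X, y, lr=0.5, num_steps=500):
    """Fit a logistic regression by gradient descent; return training accuracy."""
    X = (X - X.mean(axis=0)) / X.std(axis=0)   # standardize each input
    X = np.hstack([X, np.ones((len(X), 1))])   # append a bias column
    w = np.zeros(X.shape[1])
    for _ in range(num_steps):
        p = 1.0 / (1.0 + np.exp(-X @ w))       # predicted P(class 1)
        w -= lr * X.T @ (p - y) / len(y)       # gradient step on the mean log loss
    return np.mean((X @ w > 0) == (y == 1))

# (A) Classifier trained directly on the raw coordinates
acc_raw = fit_and_score(X_raw, y)

# (B) Classifier trained on a representation that includes the squared radius
X_feat = np.hstack([X_raw, (X_raw ** 2).sum(axis=1, keepdims=True)])
acc_feat = fit_and_score(X_feat, y)

print(f"raw inputs: {acc_raw:.2%}, with representation: {acc_feat:.2%}")
```

A linear decision boundary cannot separate one ring from the other in the raw coordinates, but it can once the squared radius is available as a feature. The encoder network plays the role of that feature map, except that its features are learned rather than designed by hand.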

The encoder network we will use here and throughout the tutorial is the multi-layer convolutional network, pictured below. It comprises 2 consecutive convolutional layers, followed by 3 fully connected layers, and uses average pooling and batch normalization between layers, as well as rectified linear units as non-linearities.

The classifier layer then takes the encoder features as input, predicting, for example, the shape latent dimension of encoded input images.

Note on terminology: In this tutorial, both the terms representations and features are used to refer to the data embeddings learned in the final layer of the encoder network (of dimension 1x84, and indicated by a red dashed box) which are fed to the classifiers.
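The layer sequence described above can be traced with a little shape arithmetic. The sketch below is plain Python and makes several assumptions that are not stated in this tutorial: 5x5 convolution kernels with stride 1 and no padding, 2x2 average pooling after each convolutional layer, and 6 then 16 convolutional channels. These values are hypothetical and chosen for illustration only. The 64x64 size is that of dSprites images, and the final feature size of 84 comes from the text above.

```python
def conv_output_size(size, kernel_size):
    """Spatial size after a convolution with stride 1 and no padding."""
    return size - kernel_size + 1

def pool_output_size(size, pool_size):
    """Spatial size after non-overlapping pooling."""
    return size // pool_size

image_size = 64          # dSprites images are 64 x 64 pixels
kernel_size = 5          # assumption: 5 x 5 kernels
pool_size = 2            # assumption: 2 x 2 average pooling
conv_channels = [6, 16]  # assumption: channels of the 2 convolutional layers
feat_size = 84           # size of the final representation (from the text)

size = image_size
for layer, channels in enumerate(conv_channels, start=1):
    size = pool_output_size(conv_output_size(size, kernel_size), pool_size)
    print(f"after conv block {layer}: {channels} x {size} x {size}")

# The flattened convolutional output feeds the 3 fully connected layers,
# the last of which outputs the 1 x 84 representation.
flattened_size = conv_channels[-1] * size * size
print(f"flattened: {flattened_size} -> ... -> {feat_size}")
```

Whatever the exact layer settings, the point is that the encoder compresses each image into a much smaller vector, and it is this vector that the classifier layer receives.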

Encoder network schematic#

# @markdown ### Encoder network schematic

Image(filename=os.path.join(REPO_PATH, "images", "feat_encoder_schematic.png"), width=1200)

The following code:

Seeds modules that will use random processes, to ensure the results are consistently reproducible, using the set_seed() function,

Collects the dSprites dataset into a torch dataset using the data.dSpritesTorchDataset class,

Initializes a training and a test sampler to keep the two datasets separate using the data.train_test_split_idx() function.

# Set the seed before building any dataset/network initializing or training,

# to ensure reproducibility

set_seed(SEED)

# Initialize a torch dataset, specifying the target latent dimension for

# the classifier

dSprites_torchdataset = data.dSpritesTorchDataset(

dSprites,

target_latent="shape"

)

# Initialize a train_sampler and a test_sampler to keep the two sets

# consistently separate

train_sampler, test_sampler = data.train_test_split_idx(

dSprites_torchdataset,

fraction_train=0.8, # 80:20 data split

randst=SEED

)

print(f"Dataset size: {len(train_sampler)} training, "

f"{len(test_sampler)} test images")
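For intuition about what a seeded 80:20 split involves, here is a minimal NumPy sketch. It is not the implementation of `data.train_test_split_idx()`, which returns samplers and whose internals are not shown here; `split_indices()` is a hypothetical helper that only illustrates the idea of shuffling indices once, with a fixed seed, and keeping the two sets disjoint.

```python
import numpy as np

def split_indices(num_items, fraction_train=0.8, seed=2021):
    """Hypothetical helper: shuffle item indices and split them in two."""
    rng = np.random.default_rng(seed)
    shuffled = rng.permutation(num_items)
    num_train = int(round(fraction_train * num_items))
    return shuffled[:num_train], shuffled[num_train:]

train_idx, test_idx = split_indices(20_000)
train_idx_again, _ = split_indices(20_000)

print(len(train_idx), len(test_idx))               # sizes of the two sets
print(np.intersect1d(train_idx, test_idx).size)    # number of shared indices
print(np.array_equal(train_idx, train_idx_again))  # same seed, same split
```

Because the split depends only on the seed, every classifier in the tutorial can be trained and tested on exactly the same images, which makes their accuracies comparable.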

Interactive Demo 1.2.1: Training a logistic regression classifier directly on images#

The following code:

Trains a logistic regression directly on the training set images to classify their shape, and assesses its performance on the test set images using the models.train_classifier() function.

Interactive Demo: Try a few different num_epochs settings to see whether performance improves with more training, e.g., between 1 and 50 epochs. (The original setting is num_epochs=25).

Submit your feedback#

# @title Submit your feedback

content_review(f"{feedback_prefix}_What_models_Video")

# Call this before any dataset/network initializing or training,

# to ensure reproducibility

set_seed(SEED)

num_epochs = 25 # DEMO: Try different numbers of training epochs

# Train a classifier directly on the images

print("Training a classifier directly on the images...")

_ = models.train_classifier(

encoder=None,

dataset=dSprites_torchdataset,

train_sampler=train_sampler,

test_sampler=test_sampler,

freeze_features=True, # There is no feature encoder to train here, anyway

num_epochs=num_epochs,

verbose=True # Print results

)

As we can observe, the classifier trained directly on the images performs only a bit above chance (39.55%) on the test set, after 25 training epochs.

Shape classification results using different feature encoders:

| Chance | None (raw data) |
|---|---|
| 33.33% | 39.55% |

Coding Exercise 1.2.1: Training a logistic regression classifier along with an encoder#

The following code:

Uses the same dSprites torch dataset (dSprites_torchdataset) initialized above, as well as the training and test samplers (train_sampler, test_sampler),

Again seeds modules that use random processes, to ensure the results are consistently reproducible,

Initializes an encoder network to use in the supervised network using the models.EncoderCore class,

Sets a proposed number of epochs to use when training the classifier and encoder (num_epochs=10).

Exercise: Train a classifier, along with the encoder, to classify the input images according to shape, using models.train_classifier(). How does it perform?

Hints:

models.train_classifier():

Is introduced in Interactive Demo 1.2.1.

Takes freeze_features as an input argument:

If set to True, the encoder is frozen, and so only the classifier layer is trained.

If set to False, the encoder is not frozen, and is trained along with the classifier layer.

def train_supervised_encoder(num_epochs, seed):
    """
    Helper function to train the encoder in a supervised way

    Args:
        num_epochs: Integer
            Number of epochs the supervised encoder is to be trained for
        seed: Integer
            The seed value for the dataset/network

    Returns:
        supervised_encoder: nn.module
            The trained encoder with mentioned parameters/hyperparameters
    """
    # Call this before any dataset/network initializing or training,
    # to ensure reproducibility
    set_seed(seed)

    # Initialize a core encoder network on which the classifier will be added
    supervised_encoder = models.EncoderCore()

    #################################################
    # Fill in missing code below (...),
    # then remove or comment the line below to test your implementation
    raise NotImplementedError("Exercise: Train a supervised encoder and classifier.")
    #################################################

    # Train an encoder and classifier on the images, using models.train_classifier()
    print("Training a supervised encoder and classifier...")
    _ = models.train_classifier(
        encoder=...,
        dataset=...,
        train_sampler=...,
        test_sampler=...,
        freeze_features=...,
        num_epochs=num_epochs,
        verbose=...  # print results
    )

    return supervised_encoder


num_epochs = 10  # Proposed number of training epochs
## Uncomment below to test your function
# supervised_encoder = train_supervised_encoder(num_epochs=num_epochs, seed=SEED)

Network performance after 10 encoder and classifier training epochs (chance: 33.33%):

Training accuracy: 100.00%

Testing accuracy: 98.70%

Submit your feedback#

# @title Submit your feedback

content_review(f"{feedback_prefix}_Logistic_regression_classifier_Exercise")

When the classifier is trained with an encoder network, however, it achieves very high classification accuracy (~98.70%) on the test set, after only 10 training epochs.

Shape classification results using different feature encoders:

| Chance | None (raw data) | Supervised |
|---|---|---|
| 33.33% | 39.55% | 98.70% |

Section 2: Supervised learning induces invariant representations#

Time estimate: ~20mins

Video 2: Supervised Learning and Invariance#

Submit your feedback#

# @title Submit your feedback

content_review(f"{feedback_prefix}_Supervised_learning_and_invariance_Video")

Section 2.1: Examining Representational Similarity Matrices (RSMs)#

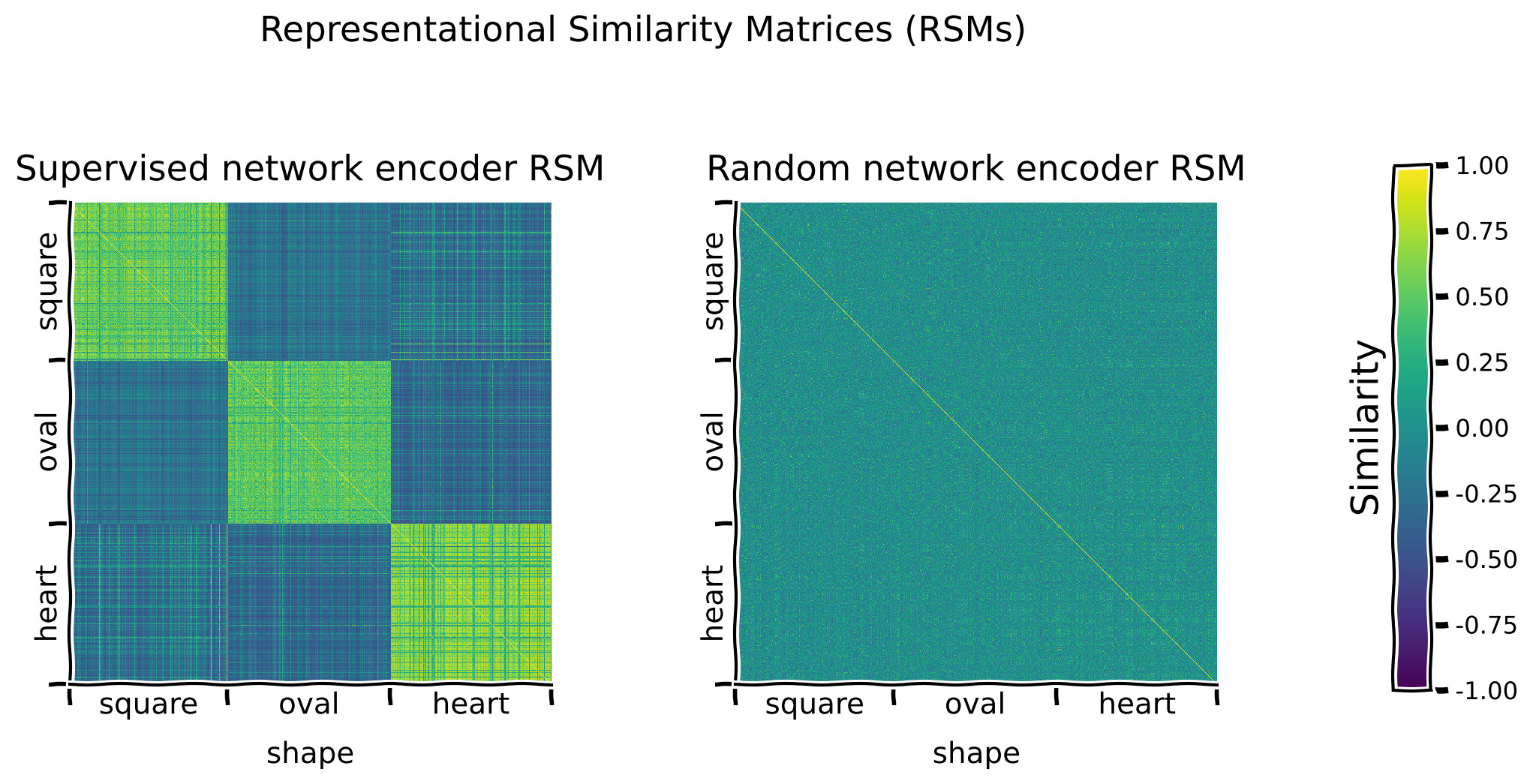

To examine the representations learned by the encoder network, we use Representational Similarity Matrices (RSMs). In these matrices, the similarity between the encoder’s representations of each possible pair of images is plotted to reveal overall structure in representation space.

Note on cosine similarity: Here, we use cosine similarity as a measure of representational similarity. Cosine similarity is the cosine of the angle between 2 vectors, and can be thought of as their normalized dot product.
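To make the "normalized dot product" description concrete, here is a small NumPy sketch on a hand-picked feature matrix. It is an illustration only: the function name `cosine_similarity_matrix()` is hypothetical, and the tutorial itself works with torch tensors rather than NumPy arrays.

```python
import numpy as np

def cosine_similarity_matrix(features):
    """Pairwise cosine similarity between the rows of a 2D feature matrix."""
    norms = np.linalg.norm(features, axis=1, keepdims=True)
    unit_features = features / norms        # each row now has length 1
    return unit_features @ unit_features.T  # dot products of unit vectors

features = np.array([
    [1.0, 0.0],   # item 0
    [2.0, 0.0],   # item 1: same direction as item 0, different length
    [0.0, 3.0],   # item 2: orthogonal to items 0 and 1
    [-1.0, 0.0],  # item 3: opposite direction to item 0
])
rsm = cosine_similarity_matrix(features)
print(rsm)
```

Items pointing in the same direction have a similarity of 1 regardless of their length, orthogonal items have a similarity of 0, and items pointing in opposite directions have a similarity of -1. Each item is maximally similar to itself, which is why the diagonal of an RSM is always 1. Note that a row of all zeros has no direction, so this sketch would return NaN for it.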

Coding Exercise 2.1.1: Complete a function that calculates RSMs#

The following code:

Lays out the skeleton of a function custom_torch_RSM_fct() which calculates an RSM from features,

Tests the custom function against the solution implementation.

Exercise: Complete the custom_torch_RSM_fct() implementation.

Hints:

custom_torch_RSM_fct():

Takes 1 input argument:

features (2D torch Tensor): Feature matrix (nbr items x nbr features)

Returns 1 output:

rsm (2D torch Tensor): Similarity matrix (nbr items x nbr items)

Uses torch.nn.functional.cosine_similarity().

torch.nn.functional.cosine_similarity():

Takes 3 arguments, in order: x1 (torch Tensor), x2 (torch Tensor), dim (int)

Returns the similarity between x1 and x2 along dimension dim.

Detailed hint:

To use torch.nn.functional.cosine_similarity() to measure the similarity of features to itself for each possible pair of items:

Pass 2 versions of features as x1 and x2, respectively.

Ensure that for x1 and x2, the features dimension is at the same position, and specify that dimension with dim.

To obtain the similarity between each possible pair of items, ensure that for x1 and x2, the items dimensions are orthogonal to one another (i.e., at different positions).

Don’t forget that to achieve this, singleton dimensions (i.e., dimensions of length 1) can be used.

def custom_torch_RSM_fct(features):
    """
    Custom function to calculate representational similarity matrix (RSM) of a feature
    matrix using pairwise cosine similarity.

    Args:
        features: 2D torch.Tensor
            Feature matrix of size (nbr items x nbr features)

    Returns:
        rsm: 2D torch.Tensor
            Similarity matrix of size (nbr items x nbr items)
    """
    num_items, num_features = features.shape

    #################################################
    # Fill in missing code below (...),
    # Complete the function below given the specific guidelines.
    # Use torch.nn.functional.cosine_similarity()
    # then remove or comment the line below to test your function
    raise NotImplementedError("Exercise: Implement RSM calculation.")
    #################################################

    # EXERCISE: Implement RSM calculation
    rsm = ...

    if not rsm.shape == (num_items, num_items):
        raise ValueError(f"RSM should be of shape ({num_items}, {num_items})")

    return rsm


## Test implementation by comparing output to solution implementation
# test_custom_torch_RSM_fct(custom_torch_RSM_fct)

custom_torch_RSM_fct() is correctly implemented.

Submit your feedback#

# @title Submit your feedback

content_review(f"{feedback_prefix}_Function_that_calculates_RSMs_Exercise")

Interactive Demo 2.1.1: Plotting the supervised network encoder RSM along different latent dimensions#

In this demo, we calculate an RSM for representations of the test set images generated by the supervised network encoder.

The following code:

Calculates and plots the RSM for the test set, with rows and columns sorted by whichever latent dimension is specified (e.g., sorting_latent="shape") using models.plot_model_RSMs().

Interactive Demo: In the current example, the rows and columns of the RSM are organized along the shape latent dimension. Try organizing them along one of the other latent dimensions ("scale", "orientation", "posX" or "posY") to see whether different patterns emerge. (The original setting is sorting_latent="shape".)

sorting_latent = "shape" # DEMO: Try sorting by different latent dimensions

print("Plotting RSMs...")

_ = models.plot_model_RSMs(

encoders=[supervised_encoder], # We pass the trained supervised_encoder

dataset=dSprites_torchdataset,

sampler=test_sampler, # We want to see the representations on the held out test set

titles=["Supervised network encoder RSM"], # Plot title

sorting_latent=sorting_latent,

)
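Sorting the rows and columns of an RSM by a latent dimension is what makes block patterns visible. How `models.plot_model_RSMs()` does this internally is not shown here, so the NumPy sketch below is only an assumption about a typical approach, using a made-up 4-item RSM and hypothetical variable names.

```python
import numpy as np

# Toy example: 4 items, each with a hypothetical "shape" label, and their RSM.
shape_labels = np.array([3.0, 1.0, 3.0, 1.0])
rsm = np.array([
    [1.0, 0.1, 0.9, 0.2],
    [0.1, 1.0, 0.2, 0.8],
    [0.9, 0.2, 1.0, 0.1],
    [0.2, 0.8, 0.1, 1.0],
])

# Order the items by their latent value, then reorder rows and columns together.
order = np.argsort(shape_labels, kind="stable")
sorted_rsm = rsm[np.ix_(order, order)]

print(order)
print(sorted_rsm)
```

After sorting, items with the same label sit next to each other, so high similarities within a label group appear as bright blocks along the diagonal. Sorting the same RSM by a latent dimension that the encoder does not represent would leave no such block structure.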

Submit your feedback#

# @title Submit your feedback

content_review(f"{feedback_prefix}_Supervised_network_encoder_RSM_Interactive_Demo")

Discussion 2.1.1: What patterns do the RSMs reveal about how the encoder represents different images?#

A. What does the yellow (maximal similarity color) diagonal, going from the top left to the bottom right, correspond to?

B. What pattern can be observed when comparing RSM values for pairs of images that share a similar latent value (e.g., 2 heart images) vs pairs of images that do not (e.g., a heart and a square image)?

C. Do some shapes appear to be encoded more similarly than others?

D. Do some latent dimensions show clearer RSM patterns than others? Why might that be so?

Supporting images for Discussion response examples for 2.1.1#

# @markdown #### Supporting images for Discussion response examples for 2.1.1

Image(filename=os.path.join(REPO_PATH, "images", "rsms_supervised_encoder_10ep_bs1000_seed2021.png"), width=1200)

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_What_patterns_do_the_RSMs_reveal_Discussion")

Section 3: Random projections don’t work as well#

Time estimate: ~20mins

Video 3: Random Representations#

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_Random_representations_Video")

Section 3.1: Examining RSMs of a random encoder#

To determine whether the patterns observed in the RSMs of the supervised network encoder are trivial, we investigate whether they also emerge from the random projections of an untrained encoder.

Coding Exercise 3.1.1: Plotting a random network encoder RSM along different latent dimensions#

In this exercise, we repeat the same analysis as in Section 2.1, but with a random encoder.

The following code:

Initializes an encoder network to use in the random network using the models.EncoderCore class,

Proposes a latent dimension along which to sort the rows and columns (sorting_latent="shape").

Exercise:

Visualize the RSMs for the supervised and random network encoders, using models.plot_model_RSMs().

Visualize the RSMs, organized along different latent dimensions ("scale", "orientation", "posX" or "posY"), and compare the patterns observed for the supervised versus the random encoder network.

Hint: models.plot_model_RSMs() is introduced in Interactive Demo 2.1.1.

def plot_rsms(seed):

"""

Helper function to plot Representational Similarity Matrices (RSMs)

Args:

seed: Integer

The seed value for the dataset/network

Returns:

random_encoder: nn.Module

The encoder with mentioned parameters/hyperparameters

"""

# Call this before any dataset/network initializing or training,

# to ensure reproducibility

set_seed(seed)

# Initialize a core encoder network that will not get trained

random_encoder = models.EncoderCore()

# Try sorting by different latent dimensions

sorting_latent = "shape"

#################################################

# Fill in missing code below (...),

# then remove or comment the line below to test your implementation

raise NotImplementedError("Exercise: Plot RSMs.")

#################################################

# Plot RSMs

print("Plotting RSMs...")

_ = models.plot_model_RSMs(

encoders=[..., ...], # Pass both encoders

dataset=...,

sampler=..., # To see the representations on the held out test set

titles=["Supervised network encoder RSM",

"Random network encoder RSM"], # Plot titles

sorting_latent=sorting_latent,

)

return random_encoder

## Uncomment below to test your function

# random_encoder = plot_rsms(seed=SEED)

Example output:

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_Plotting_a_random_network_encoder_Exercise")

Discussion 3.1.1: What does comparing these RSMs reveal about the potential value of trained versus random encoder representations?#

A. What patterns, if any, are visible in the random network encoder RSM? B. Which encoder network is most likely to produce meaningful representations?

Supporting images for Discussion response examples for 3.1.1: All random encoder RSMs#

Show code cell source

# @markdown #### Supporting images for Discussion response examples for 3.1.1: All random encoder RSMs

Image(filename=os.path.join(REPO_PATH, "images", "rsms_random_encoder_0ep_bs0_seed2021.png"), width=1000)


Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_Trained_vs_Random_encoder_Discussion")

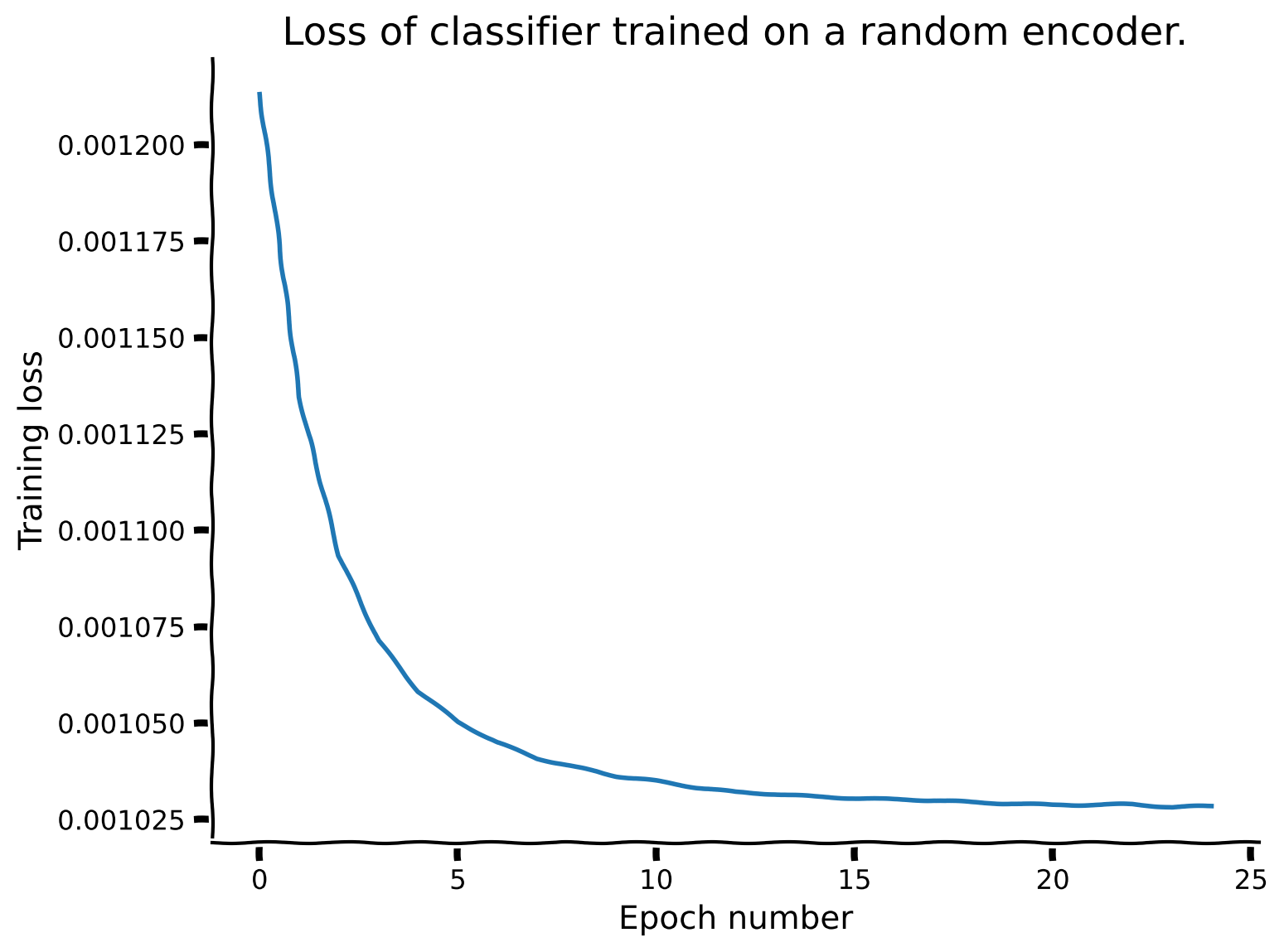

Coding Exercise 3.1.2: Evaluating the classification performance of a logistic regression trained on the representations produced by a random network encoder#

In this exercise, we repeat a similar analysis to Section 1.2, but with the random encoder network. Importantly, this time, the encoder parameters must stay frozen during training, which is done by setting freeze_features=True. Instead of being given a suggested number of training epochs ahead of time, we use the training loss array to select a good value.

The following code:

Trains a logistic regression on top of the random encoder network to classify images based on shape, and assesses its performance on the test set images, using models.train_classifier() with freeze_features=True to ensure that the encoder is not trained, and only the classifier is.
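As a rough illustration of what keeping an encoder frozen means, the NumPy sketch below trains only a softmax classifier on top of a fixed random projection. It is a toy stand-in under stated assumptions, not what models.train_classifier() does internally: the data are random, and the names W_enc, W_clf and b_clf are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)

# Toy inputs and 3-class labels (stand-ins for images and shapes)
n_samples, n_inputs, n_feats, n_classes = 300, 20, 8, 3
X = rng.normal(size=(n_samples, n_inputs))
y = rng.integers(0, n_classes, size=n_samples)

# "Encoder": a fixed random projection followed by a ReLU; never updated
W_enc = rng.normal(size=(n_inputs, n_feats))
W_enc_before = W_enc.copy()

# Classifier: the only parameters that receive gradient updates
W_clf = np.zeros((n_feats, n_classes))
b_clf = np.zeros(n_classes)

loss_array = []
for epoch in range(25):
    feats = np.maximum(X @ W_enc, 0.0)           # frozen encoder forward pass
    logits = feats @ W_clf + b_clf
    logits -= logits.max(axis=1, keepdims=True)  # for numerical stability
    probs = np.exp(logits) / np.exp(logits).sum(axis=1, keepdims=True)
    loss = -np.log(probs[np.arange(n_samples), y]).mean()
    loss_array.append(loss)

    # Cross-entropy gradient with respect to the classifier parameters only
    grad_logits = probs.copy()
    grad_logits[np.arange(n_samples), y] -= 1.0
    grad_logits /= n_samples
    W_clf -= 0.01 * feats.T @ grad_logits
    b_clf -= 0.01 * grad_logits.sum(axis=0)
```

Because no update is ever applied to W_enc, the representation the classifier sees is exactly the one produced at initialization; any gain in performance can only come from the classifier weights.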

Exercise:

Set a number of epochs for which to train the classifier.

Plot the training loss array (random_loss_array, i.e., training loss at each epoch) returned when training the model.

Rerun the classifier if more training epochs are needed based on the progression of the training loss.

def plot_loss(num_epochs, seed):

"""

Helper function to train a classifier on the random encoder representations and plot its training loss

Args:

num_epochs: Integer

Number of epochs for which to train the classifier

seed: Integer

The seed value for the dataset/network

Returns:

random_loss_array: List

Loss per epoch

"""

# Call this before any dataset/network initializing or training,

# to ensure reproducibility

set_seed(seed)

# Train classifier on the randomly encoded images

print("Training a classifier on the random encoder representations...")

_, random_loss_array, _, _ = models.train_classifier(

encoder=random_encoder,

dataset=dSprites_torchdataset,

train_sampler=train_sampler,

test_sampler=test_sampler,

freeze_features=True, # Keep the encoder frozen while training the classifier

num_epochs=num_epochs,

verbose=True # Print results

)

#################################################

# Fill in missing code below (...),

# then remove or comment the line below to test your implementation

raise NotImplementedError("Exercise: Plot loss array.")

#################################################

# Plot the loss array

fig, ax = plt.subplots()

ax.plot(...)

ax.set_title(...)

ax.set_xlabel(...)

ax.set_ylabel(...)

return random_loss_array

## Set a reasonable number of training epochs

num_epochs = 25

## Uncomment below to test your plot

# random_loss_array = plot_loss(num_epochs=num_epochs, seed=SEED)

Network performance after 25 classifier training epochs (chance: 33.33%):

Training accuracy: 46.02%

Testing accuracy: 44.67%

Example output:

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_Evaluating_the_classification_performance_Exercise")

The training loss is fairly stable by 25 epochs, at which point the classifier reaches 44.67% accuracy on the test dataset.
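One way to turn "fairly stable" into something checkable is to compare the loss at the last epoch with the loss a few epochs earlier. The helper below is a hypothetical sketch in plain Python; the window and rel_tol values are arbitrary illustrative choices, not thresholds used anywhere in the tutorial.

```python
def has_plateaued(loss_array, window=5, rel_tol=0.01):
    """Return True if the loss changed by less than rel_tol over the last window epochs.

    Hypothetical helper: window and rel_tol are arbitrary illustrative choices.
    """
    if len(loss_array) <= window:
        return False  # Not enough epochs to judge
    previous, latest = loss_array[-window - 1], loss_array[-1]
    return abs(previous - latest) <= rel_tol * abs(previous)


# Toy loss curve that decays quickly and then flattens out
toy_losses = [1.0 * 0.7 ** epoch + 0.5 for epoch in range(25)]
print(has_plateaued(toy_losses[:5]))  # False: too few epochs
print(has_plateaued(toy_losses))      # True: the curve has flattened
```

A check like this is only a complement to looking at the plotted loss curve, which remains the most direct way to judge whether more epochs are needed.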

Shape classification results using different feature encoders:

| Chance | None (raw data) | Supervised | Random |
|---|---|---|---|
| 33.33% | 39.55% | 98.70% | 44.67% |

Discussion 3.1.2: What can we conclude about the potential consequences of using random projections with a dataset like dSprites?#

A. How does the classifier performance compare to the classifier trained directly on the images? B. How does the classifier performance compare to the classifier trained along with the encoder (supervised encoder)? C. What explains these different performances?

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_Random_projections_with_dSprites_Discussion")

Section 4: Generative approaches to representation learning can fail#

Time estimate: ~30mins

Video 4: Generative models#

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_Generative_models_Video")

Section 4.1: Examining the RSMs of a Variational Autoencoder#

We next ask: what kind of representations can a network learn in the absence of labelled data? To answer this question, we first look at a generative model, namely the Variational Autoencoder (VAE).

Given that generative models typically require more training than supervised models, instead of pre-training a network here, we will load one that was pre-trained for 300 epochs. Importantly, the encoder shares the same architecture as the one used for the supervised and random examples above.

The following code:

Loads the parameters of a full Variational AutoEncoder (VAE) network (encoder and decoder) pre-trained on the generative task of reconstructing the input images, under the Kullback–Leibler Divergence (KLD) minimization constraint over the latent space that characterizes VAEs, using load.load_encoder() and load.load_vae_decoder().
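For reference, the two parts of a basic VAE objective can be sketched in NumPy as follows. This is an illustration under assumptions rather than the loss used to pre-train the loaded network: it assumes a diagonal Gaussian posterior, a standard normal prior, and a squared-error reconstruction term, and the names vae_loss and kld_weight are hypothetical.

```python
import numpy as np


def vae_loss(x, x_recon, mu, logvar, kld_weight=1.0):
    """Reconstruction error plus KL divergence to a standard normal prior.

    Assumes a diagonal Gaussian posterior N(mu, exp(logvar)) and a
    squared-error reconstruction term; both are illustrative choices.
    """
    recon = np.sum((x - x_recon) ** 2, axis=1)
    # Closed-form KL( N(mu, sigma^2) || N(0, 1) ), summed over latent dimensions
    kld = -0.5 * np.sum(1.0 + logvar - mu ** 2 - np.exp(logvar), axis=1)
    return np.mean(recon + kld_weight * kld), np.mean(recon), np.mean(kld)


rng = np.random.default_rng(0)
x = rng.random(size=(4, 64))        # 4 toy "images", flattened
mu = rng.normal(size=(4, 10))       # posterior means
logvar = rng.normal(size=(4, 10))   # posterior log-variances

# Perfect reconstruction: only the KLD term contributes to the total
total, recon, kld = vae_loss(x, x, mu, logvar)
# Posterior equal to the prior: the KLD term vanishes
_, _, kld_prior = vae_loss(x, x, np.zeros((4, 10)), np.zeros((4, 10)))
```

The KLD term pulls the latent distribution towards the prior while the reconstruction term rewards preserving pixel-level detail, so nothing in this objective refers to shape labels.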

# Call this before any dataset/network initializing or training,

# to ensure reproducibility

set_seed(SEED)

# Load VAE encoder and decoder pre-trained on the reconstruction and KLD tasks

vae_encoder = load.load_encoder(REPO_PATH, model_type="vae")

vae_decoder = load.load_vae_decoder(REPO_PATH)

Interactive Demo 4.1.1: Plotting example reconstructions using the pre-trained VAE encoder and decoder#

In this demo, we sample images from the test set, and take a look at the quality of the reconstructions using models.plot_vae_reconstructions().

Interactive Demo: Try plotting different images from the test dataset by selecting different test_sampler.indices values. (Original setting is indices=test_sampler.indices[:10].)

models.plot_vae_reconstructions(

vae_encoder, # Pre-trained encoder

vae_decoder, # Pre-trained decoder

dataset=dSprites_torchdataset,

indices=test_sampler.indices[:10], # DEMO: Select different indices to plot from the test set

title="VAE test set image reconstructions",

)

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_Pretrained_VAE_Interactive_Demo")

Discussion 4.1.1: How does the VAE perform on the reconstruction task?#

A. Which latent features does the network appear to preserve well, and which does it preserve less well? B. Based on the reconstruction performance, what do you expect to see in the different RSMs?

Note on reconstruction quality: This VAE network uses a basic VAE loss with a convolutional encoder (our core encoder network), and a deconvolutional decoder. This can lead to some blurriness in the reconstructed shapes which a more sophisticated VAE could overcome.

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_VAE_on_the_reconstruction_task_Discussion")

Interactive Demo 4.1.2: Visualizing the VAE encoder RSMs, organized along different latent dimensions#

We will now compare the pre-trained VAE encoder network RSM to the previously generated encoder RSMs.

Interactive Demo: Visualize the RSMs, organized along different latent dimensions ("scale", "orientation", "posX" or "posY"), and compare the patterns observed for the different encoder networks. (The original setting is sorting_latent="shape".)

sorting_latent = "shape" # DEMO: Try sorting by different latent dimensions

print("Plotting RSMs...")

_ = models.plot_model_RSMs(

encoders=[supervised_encoder, random_encoder, vae_encoder], # Pass all three encoders

dataset=dSprites_torchdataset,

sampler=test_sampler, # To see the representations on the held out test set

titles=["Supervised network encoder RSM", "Random network encoder RSM",

"VAE network encoder RSM"], # Plot titles

sorting_latent=sorting_latent,

)

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_VAE_encoder_RSMs_Interactive_Demo")

Discussion 4.1.2: What can we conclude about the ability of generative models like VAEs to construct a meaningful representation space?#

A. What structure can be observed in the pre-trained VAE encoder RSMs when sorted along the different latent dimensions, and what does that suggest about the feature space learned by the VAE encoder? B. How do the pre-trained VAE encoder RSMs compare to the supervised and random encoder network RSMs? C. What explains these different RSMs? D. How well will the pre-trained VAE encoder likely perform on the shape classification task, as compared to the other encoder networks? E. Might the pre-trained VAE encoder be better suited to predicting a different latent dimension?

Supporting images for Discussion response examples for 4.1.2: All VAE encoder RSMs#

Show code cell source

# @markdown #### Supporting images for Discussion response examples for 4.1.2: All VAE encoder RSMs

Image(filename=os.path.join(REPO_PATH, "images", "rsms_vae_encoder_300ep_bs500_seed2021.png"), width=1000)


Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_Construct_a_meaningful_representation_space_Discussion")

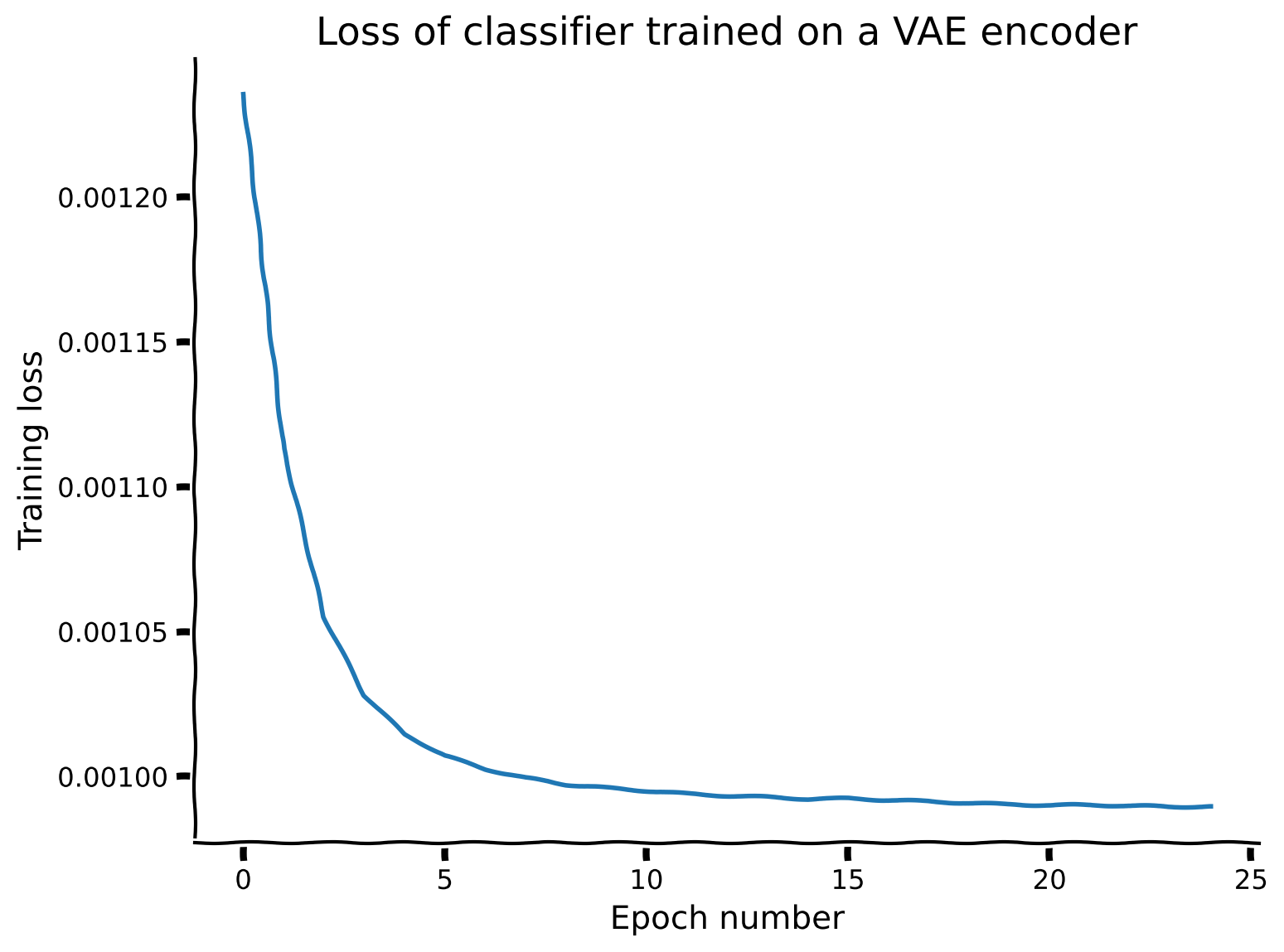

Coding Exercise 4.1.2: Evaluating the classification performance of a logistic regression trained on the representations produced by the pre-trained VAE network encoder#

For the pre-trained VAE encoder, as the encoder parameters have already been trained, they should be kept frozen while the classifier is trained by setting freeze_features=True.

Exercise:

Set a number of epochs for which to train the classifier.

Train a classifier on top of the frozen pre-trained VAE encoder to classify the input images according to shape, using `models.train_classifier()`.

Plot the loss array returned when training the model, and update the number of training epochs, if needed.

Hint: models.train_classifier() is introduced in Interactive Demo 1.2.1.

def vae_train_loss(num_epochs, seed):

"""

Helper function to train a classifier on the pre-trained variational autoencoder (VAE) encoder representations and plot its training loss

Args:

num_epochs: Integer

Number of epochs for which to train the classifier

seed: Integer

The seed value for the dataset/network

Returns:

vae_loss_array: List

Loss per epoch

"""

# Call this before any dataset/network initializing or training,

# to ensure reproducibility

set_seed(seed)

#################################################

# Fill in missing code below (...),

# then remove or comment the line below to test your implementation

raise NotImplementedError("Exercise: Train a classifier on the pre-trained VAE encoder representations.")

#################################################

# Train a classifier on the frozen VAE encoder representations, using models.train_classifier()

print("Training a classifier on the pre-trained VAE encoder representations...")

_, vae_loss_array, _, _ = models.train_classifier(

encoder=...,

dataset=...,

train_sampler=...,

test_sampler=...,

freeze_features=..., # Keep the encoder frozen while training the classifier

num_epochs=...,

verbose=... # Print results

)

#################################################

# Fill in missing code below (...),

# then remove or comment the line below to test your implementation

raise NotImplementedError("Exercise: Plot the VAE classifier training loss.")

#################################################

# Plot the VAE classifier training loss.

fig, ax = plt.subplots()

ax.plot(...)

ax.set_title(...)

ax.set_xlabel(...)

ax.set_ylabel(...)

return vae_loss_array

# Set a reasonable number of training epochs

num_epochs = 25

## Uncomment below to test your function

# vae_loss_array = vae_train_loss(num_epochs=num_epochs, seed=SEED)

Network performance after 25 classifier training epochs (chance: 33.33%):

Training accuracy: 46.48%

Testing accuracy: 45.75%

Example output:

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_Evaluate_performance_using_pretrained_VAE_Exercise")

The training loss is fairly stable by 25 epochs, at which point the classifier reaches 45.75% accuracy on the test dataset.

Shape classification results using different feature encoders:

| Chance | None (raw data) | Supervised | Random | VAE |
|---|---|---|---|---|
| 33.33% | 39.55% | 98.70% | 44.67% | 45.75% |

Section 5: The modern approach to self-supervised training for invariance#

Time estimate: ~10mins

Video 5: Modern Approach in Self-supervised Learning#

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_Modern_approach_in_Selfsupervised_Learning_Video")

Section 5.1: Examining different options for learning invariant representations.#

We now take a look at a few options for learning invariant shape representations for a dataset such as dSprites.

Interactive Demo 5.1.1: Visualizing a few different image transformations available that could be used to learn invariance#

The following code:

Initializes a set of transforms called invariance_transforms using the torchvision.transforms.RandomAffine class,

Collects the dSprites dataset into a torch dataset dSprites_invariance_torchdataset which takes the invariance_transforms as input and deploys the transforms when it is called,

Shows a few examples of images and their transformed versions using the data.dSpritesTorchDataset show_images() method.

The torchvision.transforms.RandomAffine class enables us to predetermine which types and ranges of transforms will be sampled from when transforming the images, by setting the following arguments:

degrees: Absolute maximum number of degrees to rotate

translate: Absolute maximum proportion of width to shift in x, and of height to shift in y

scale: Minimum to maximum scaling factor
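To get a feel for the ranges these three arguments define, the sketch below samples transformation parameters by hand with NumPy. The helper sample_affine_params is hypothetical and only mirrors the ranges described above; torchvision's own sampling may differ in detail (for example, in how pixel shifts are rounded), and the 64 x 64 image size is an assumption about the dSprites images.

```python
import numpy as np


def sample_affine_params(rng, img_size, degrees, translate, scale):
    """Sample one rotation angle, pixel shift and scaling factor.

    Hypothetical helper mirroring the argument ranges described above.
    """
    width, height = img_size
    angle = rng.uniform(-degrees, degrees)          # degrees of rotation
    max_dx, max_dy = translate[0] * width, translate[1] * height
    shift = (rng.uniform(-max_dx, max_dx), rng.uniform(-max_dy, max_dy))
    factor = rng.uniform(scale[0], scale[1])        # scaling factor
    return angle, shift, factor


rng = np.random.default_rng(0)
params = [
    sample_affine_params(rng, img_size=(64, 64), degrees=90,
                         translate=(0.2, 0.2), scale=(0.8, 1.2))
    for _ in range(1000)
]
angles, shifts, factors = zip(*params)
```

With these settings, a shape can be rotated by up to a quarter turn in either direction, shifted by up to about 13 pixels along each axis, and shrunk or enlarged by up to 20%.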

Interactive Demo: Try out a few combinations of the transformation parameters, and visualize the pairs of transformations of the same image. (The original settings are degrees=90, translate=(0.2, 0.2), scale=(0.8, 1.2).)

# Call this before any dataset/network initializing or training,

# to ensure reproducibility

set_seed(SEED)

# DEMO: Try some random affine data augmentations combinations to apply to the images

invariance_transforms = torchvision.transforms.RandomAffine(

degrees=90,

translate=(0.2, 0.2), # (in x, in y)

scale=(0.8, 1.2) # min to max scaling

)

# Initialize a simclr-specific torch dataset

dSprites_invariance_torchdataset = data.dSpritesTorchDataset(

dSprites,

target_latent="shape",

simclr=True,

simclr_transforms=invariance_transforms

)

# Show a few examples of pairs of image augmentations

_ = dSprites_invariance_torchdataset.show_images(randst=SEED)

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_Image_transformations_Interactive_Demo")

Section 6: How to train for invariance to transformations with a target network#

Time estimate: ~40mins

Video 6: Data Transformations#

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_Data_Transformations_Video")

Section 6.1: Using image transformations to learn feature invariant representations in a Self-supervised Learning (SSL) network.#

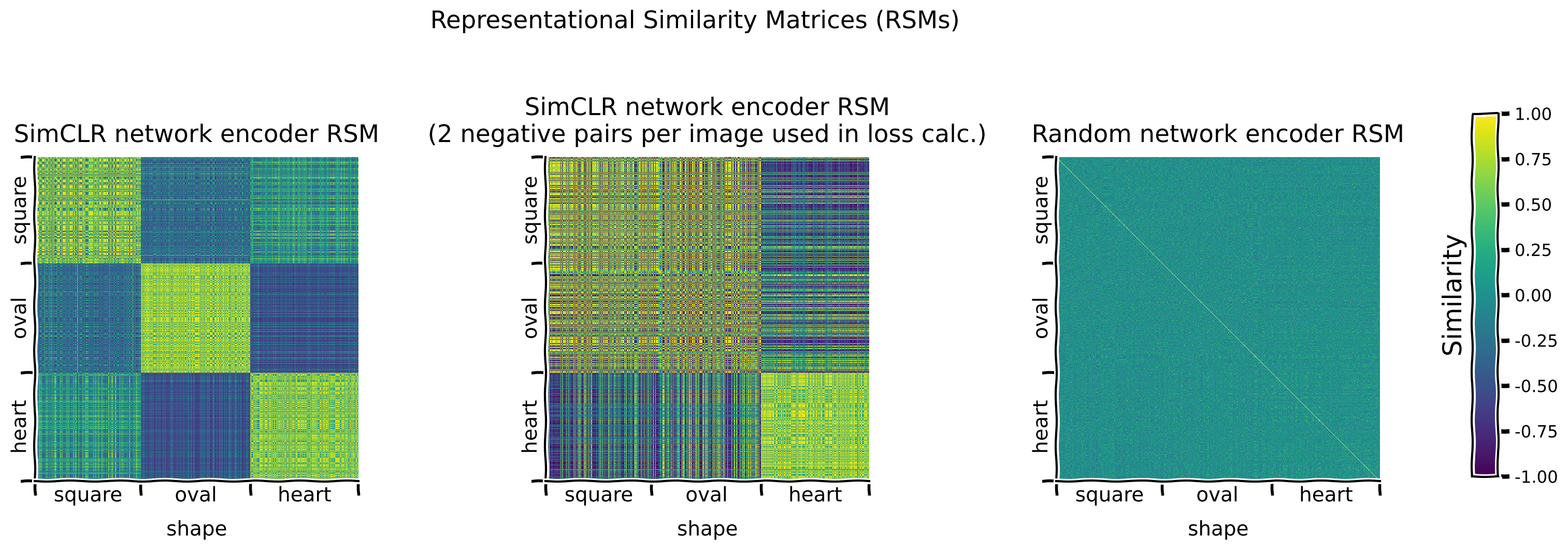

We will now investigate the effects of selecting certain transformations compared to others on the invariance learned by an encoder network trained with a specific type of SSL algorithm, namely SimCLR. Specifically, we will observe how pre-training an encoder network with SimCLR affects the performance of a classifier trained on the representations the network has learned.

Coding Exercise 6.1.1: Complete a SimCLR loss function#

The following code:

Lays out the skeleton of a function custom_simclr_contrastive_loss() which calculates the contrastive loss for a SimCLR network,

Tests the custom function against the solution implementation,

Trains SimCLR for a few epochs.

Exercise:

Complete the custom_simclr_contrastive_loss() implementation,

Plot the loss after training SimCLR with the custom loss function for a few epochs.

Detailed hint:

custom_simclr_contrastive_loss():

Takes 2 input arguments:

proj_feat1 (2D torch Tensor): Projected features for first image augmentations (batch_size x feat_size)

proj_feat2 (2D torch Tensor): Projected features for second image augmentations (batch_size x feat_size)

Computes the similarity_matrix for all possible pairs of image augmentations.

Identifies positive and negative sample indicators for indexing the similarity_matrix:

pos_sample_indicators (2D torch Tensor): Tensor indicating the positions of positive image pairs with 1s (and 0s in all other positions). (batch_size * 2 x batch_size * 2)

neg_sample_indicators (2D torch Tensor): Tensor indicating the positions of negative image pairs with 1s (and 0s in all other positions). (batch_size * 2 x batch_size * 2)

Computes the 2 parts of the contrastive loss, retrieving the relevant values from the similarity_matrix using the indicators:

numerator: Calculated from the similarity_matrix values for positive pairs.

denominator: Calculated from the similarity_matrix values for negative pairs.
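To see which entries of the 2N x 2N similarity_matrix the two indicator tensors pick out, the NumPy sketch below builds them for a tiny batch. It mirrors the roll-and-identity construction used in the function skeleton, with NumPy standing in for torch only to keep the example light; it does not compute the loss itself.

```python
import numpy as np

batch_size = 3  # N; features are stacked as [aug1_0..aug1_2, aug2_0..aug2_2]

# Positive pairs: row i has its single 1 in column (i + N) mod 2N, i.e. the
# other augmentation of the same image
pos_sample_indicators = np.roll(np.eye(2 * batch_size), batch_size, axis=1)

# Every pair except an item paired with itself; note that this mask also
# covers the positive pairs, as in the tutorial code
neg_sample_indicators = (
    np.ones((2 * batch_size, 2 * batch_size)) - np.eye(2 * batch_size)
)

print(pos_sample_indicators.astype(int))
print(neg_sample_indicators.astype(int))
```

Multiplying the similarity matrix elementwise by one of these masks, then summing along a row, keeps only the entries that the mask marks with 1s for that item.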

def custom_simclr_contrastive_loss(proj_feat1, proj_feat2, temperature=0.5):

"""

Returns contrastive loss, given sets of projected features, with positive

pairs matched along the batch dimension.

Args:

Required:

proj_feat1: 2D torch.Tensor

Projected features for first image with augmentations (size: batch_size x feat_size)

proj_feat2: 2D torch.Tensor

Projected features for second image with augmentations (size: batch_size x feat_size)

Optional:

temperature: Float

relaxation temperature (default: 0.5)

l2 normalization along with temperature effectively weights different

examples, and an appropriate temperature can help the model learn from hard negatives.

Returns:

loss: Float

Mean contrastive loss

"""

device = proj_feat1.device

if len(proj_feat1) != len(proj_feat2):

raise ValueError(f"Batch dimension of proj_feat1 ({len(proj_feat1)}) "

f"and proj_feat2 ({len(proj_feat2)}) should be same")

batch_size = len(proj_feat1) # N

z1 = torch.nn.functional.normalize(proj_feat1, dim=1)

z2 = torch.nn.functional.normalize(proj_feat2, dim=1)

proj_features = torch.cat([z1, z2], dim=0) # 2N x projected feature dimension

similarity_matrix = torch.nn.functional.cosine_similarity(

proj_features.unsqueeze(1), proj_features.unsqueeze(0), dim=2

) # dim: 2N x 2N

# Initialize arrays to identify sets of positive and negative examples, of

# shape (batch_size * 2, batch_size * 2), and where

# 0 indicates that 2 images are NOT a pair (either positive or negative, depending on the indicator type)

# 1 indicates that 2 images ARE a pair (either positive or negative, depending on the indicator type)

pos_sample_indicators = torch.roll(torch.eye(2 * batch_size), batch_size, 1).to(device)

neg_sample_indicators = (torch.ones(2 * batch_size) - torch.eye(2 * batch_size)).to(device)

#################################################

# Fill in missing code below (...),

# then remove or comment the line below to test your function

raise NotImplementedError("Exercise: Implement SimCLR loss.")

#################################################

# Implement the SimCLR loss calculation

# Calculate the numerator of the Loss expression by selecting the appropriate elements from similarity_matrix.

# Use the pos_sample_indicators tensor

numerator = ...

# Calculate the denominator of the Loss expression by selecting the appropriate elements from similarity_matrix,

# and summing over pairs for each item.

# Use the neg_sample_indicators tensor

denominator = ...

if (denominator < 1e-8).any(): # Clamp to avoid division by 0

denominator = torch.clamp(denominator, 1e-8)

loss = torch.mean(-torch.log(numerator / denominator))

return loss

## Uncomment below to test your function

# test_custom_contrastive_loss_fct(custom_simclr_contrastive_loss)

custom_simclr_contrastive_loss() is correctly implemented.

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_SimCLR_loss_function_Exercise")

We can now train the SimCLR encoder with the custom contrastive loss for a few epochs.

# Call this before any dataset/network initializing or training,

# to ensure reproducibility

set_seed(SEED)

# Train SimCLR for a few epochs

print("Training a SimCLR encoder with the custom contrastive loss...")

num_epochs = 5

_, test_simclr_loss_array = models.train_simclr(

encoder=models.EncoderCore(),

dataset=dSprites_invariance_torchdataset,

train_sampler=train_sampler,

num_epochs=num_epochs,

loss_fct=custom_simclr_contrastive_loss

)

# Plot SimCLR loss over a few epochs.

fig, ax = plt.subplots()

ax.plot(test_simclr_loss_array)

ax.set_title("SimCLR network loss")

ax.set_xlabel("Epoch number")

_ = ax.set_ylabel("Training loss")

Given that self-supervised models typically require more training than supervised models, instead of fully pre-training a network here, we will load one that was pre-trained for 60 epochs. Again, the encoder shares the same architecture as the one used for the supervised, random and VAE examples above.

The following code:

Loads the parameters of a SimCLR network pre-trained on the SimCLR contrastive task using

load.load_encoder().

# Load SimCLR encoder pre-trained on the contrastive loss

simclr_encoder = load.load_encoder(REPO_PATH, model_type="simclr")

Interactive Demo 6.1.1: Evaluating the classification performance of a logistic regression trained on the representations produced by a SimCLR network encoder that was pre-trained using different image transformations#

As with the VAE encoder, the parameters of the pre-trained SimCLR encoder have already been trained, so they should be kept frozen while the classifier is trained, by setting freeze_features=True.

We train and test with dSprites_torchdataset instead of dSprites_invariance_torchdataset, as we are interested in the classifier performance on the real dSprites images, and not their augmentations.

Interactive Demo: Try different numbers of epochs for which to train the classifier. (The original setting is num_epochs=10.)

# Call this before any dataset/network initializing or training,

# to ensure reproducibility

set_seed(SEED)

print("Training a classifier on the pre-trained SimCLR encoder representations...")

_, simclr_loss_array, _, _ = models.train_classifier(

encoder=simclr_encoder,

dataset=dSprites_torchdataset,

train_sampler=train_sampler,

test_sampler=test_sampler,

freeze_features=True, # Keep the encoder frozen while training the classifier

num_epochs=10, # DEMO: Try different numbers of epochs

verbose=True

)

fig, ax = plt.subplots()

ax.plot(simclr_loss_array)

ax.set_title("Loss of classifier trained on a SimCLR encoder.")

ax.set_xlabel("Epoch number")

_ = ax.set_ylabel("Training loss")

Network performance after 10 classifier training epochs (chance: 33.33%):

Training accuracy: 97.83%

Testing accuracy: 97.53%

Submit your feedback#


# @title Submit your feedback

content_review(f"{feedback_prefix}_Evaluate_performance_using_pretrained_SimCLR_Interactive_Demo")

The network (using the transforms proposed above) performs at 97.53% accuracy on the test dataset, after 10 classifier training epochs.

Shape classification results using different feature encoders:

| Chance | None (raw data) | Supervised | Random | VAE | SimCLR |
|---|---|---|---|---|---|
| 33.33% | 39.55% | 98.70% | 44.67% | 45.75% | 97.53% |
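As a quick way to read the table, the short sketch below computes each encoder's margin over chance and the gap between the supervised and SimCLR encoders, in percentage points. The numbers are copied from the table above; the variable names are ours.

```python
# Test accuracies (%) copied from the results table above
chance = 33.33
accuracies = {
    "None (raw data)": 39.55,
    "Supervised": 98.70,
    "Random": 44.67,
    "VAE": 45.75,
    "SimCLR": 97.53,
}

# Margin over chance for each encoder, in percentage points
gain_over_chance = {
    name: round(acc - chance, 2) for name, acc in accuracies.items()
}

# Gap between the supervised encoder and the self-supervised SimCLR encoder
supervised_simclr_gap = round(
    accuracies["Supervised"] - accuracies["SimCLR"], 2
)

for name, gain in gain_over_chance.items():
    print(f"{name}: +{gain:.2f} points over chance")
print(f"Supervised minus SimCLR: {supervised_simclr_gap:.2f} points")
```

The SimCLR encoder, which never saw shape labels during pre-training, ends up within about one percentage point of the fully supervised encoder, while the random and VAE encoders stay close to the raw-data baseline.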

Section 7: Ethical considerations for self-supervised learning from biased datasets#

Video 7: Un/Self-Supervised Learning#

Submit your feedback#


# @title Submit your feedback

content_review(f"{feedback_prefix}_Un_self_supervised_learning_Video")

Section 7.1: The consequences of training models on biased datasets#

If a model is trained on a biased dataset, it is likely to learn a representational encoding that reproduces these biases, impairing its ability to generalize properly and increasing the likelihood that it will propagate these biases forward.

Here, we investigate the effects of training the models on a biased subset of the training dataset. Specifically, we introduce train_sampler_biased, a training dataset sampler that only samples:

Squares, if they are centered on the lefthand side of an image (posX: 0 to 0.3),

Ovals, if they are centered in the center of an image (posX: 0.35 to 0.65),

Hearts, if they are centered on the righthand side of an image (posX: 0.7 to 1.0).

This sampling bias introduces a correlation between shape and posX that does not exist in the original dataset.
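The selection rule above can be sketched in a few lines of plain Python. This is an illustration under stated assumptions, not the code behind data.train_test_split_idx: the toy shapes and posX values are randomly generated, and the names POSX_WINDOWS and biased_indices are hypothetical.

```python
import random

SHAPES = ("square", "oval", "heart")

# posX window in which each shape is allowed, as listed above
POSX_WINDOWS = {
    "square": (0.0, 0.3),
    "oval": (0.35, 0.65),
    "heart": (0.7, 1.0),
}


def biased_indices(shapes, pos_x):
    """Return indices of examples whose posX lies in their shape's window."""
    keep = []
    for idx, (shape, x) in enumerate(zip(shapes, pos_x)):
        low, high = POSX_WINDOWS[shape]
        if low <= x <= high:
            keep.append(idx)
    return keep


# Toy unbiased data: shape and posX are drawn independently
rng = random.Random(0)
toy_shapes = [rng.choice(SHAPES) for _ in range(3000)]
toy_pos_x = [rng.random() for _ in range(3000)]

kept = biased_indices(toy_shapes, toy_pos_x)

# After filtering, the mean posX differs systematically by shape
mean_pos_x = {}
for shape in SHAPES:
    xs = [toy_pos_x[i] for i in kept if toy_shapes[i] == shape]
    mean_pos_x[shape] = sum(xs) / len(xs)

print(f"Kept {len(kept)} of {len(toy_shapes)} toy examples")
print(mean_pos_x)
```

In the toy data, shape and posX are independent before filtering. After filtering, knowing posX is enough to identify the shape, which is exactly the shortcut a model can learn instead of the shape itself.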

We then train each model as above on the dataset, and observe their performance when tested on an unbiased dataset.

Note on dataset size: This biased sampling also significantly reduces the size of the training dataset available (approximately 6x). Thus, it would not be fair to compare our results here to those obtained previously in the tutorial, when we were using the full dataset. For this reason, as a control, we will also separately train the models with train_sampler_bias_ctrl, a training dataset sampler that does not share the same sampling bias as train_sampler_biased, but can only sample as many samples as train_sampler_biased can.
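One simple way to build a size-matched control is to draw a uniformly random subset with as many examples as the biased subset. The sketch below assumes this approach; how control=True is actually implemented in data.train_test_split_idx is not shown here, and the function name and the sizes used are hypothetical.

```python
import random


def size_matched_control(num_total, num_keep, seed=0):
    """Draw num_keep distinct indices uniformly from range(num_total)."""
    rng = random.Random(seed)
    return sorted(rng.sample(range(num_total), num_keep))


# Hypothetical sizes, for illustration only
num_total_examples = 3000
num_biased_examples = 500

control_idx = size_matched_control(num_total_examples, num_biased_examples)
print(f"Control subset: {len(control_idx)} examples")
```

Since the control indices are drawn without regard to shape or posX, any performance difference between the two samplers can be attributed to the bias rather than to the dataset size.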

# Call this before any dataset/network initializing or training,

# to ensure reproducibility

set_seed(SEED)

bias_type = "shape_posX_spaced" # Name of bias

# Initialize a biased training sampler and an unbiased test sampler

train_sampler_biased, test_sampler_for_biased = data.train_test_split_idx(

dSprites_torchdataset,

fraction_train=0.95, # 95:5 Split to partially compensate for loss of training examples due to bias

randst=SEED,

train_bias=bias_type

)

# Initialize a control, unbiased training sampler and an unbiased test sampler

train_sampler_bias_ctrl, test_sampler_for_bias_ctrl = data.train_test_split_idx(

dSprites_torchdataset,

fraction_train=0.95,

randst=SEED,

train_bias=bias_type,

control=True

)

print(f"Biased dataset: {len(train_sampler_biased)} training, "

f"{len(test_sampler_for_biased)} test images")

print(f"Bias control dataset: {len(train_sampler_bias_ctrl)} training, "

f"{len(test_sampler_for_bias_ctrl)} test images")

We plot some images sampled with train_sampler_biased to observe the pattern described above where shape and posX are now correlated.

To better visualize the bias introduced, we will plot them with annotations that show, in red:

The edges of each of the 3 posX sections, and

The center, i.e. (posX, posY), for each shape.

print("Plotting first 20 images from the biased training dataset.\n")

dSprites.show_images(indices=train_sampler_biased.indices[:20], annotations="posX_quadrants")

We also plot some images sampled with train_sampler_bias_ctrl to verify visually that this biased pattern does not appear in the control dataset.

Again, the annotations are added, purely for visualization purposes.

print("Plotting sample images from the bias control training dataset.\n")

dSprites.show_images(indices=train_sampler_bias_ctrl.indices[:20], annotations="posX_quadrants")


# @markdown ### Function to run full training procedure
# @markdown (from initializing and pretraining encoders to training classifiers):
# @markdown `full_training_procedure(train_sampler, test_sampler)`

def full_training_procedure(train_sampler, test_sampler, title=None,
                            dataset_type="biased", verbose=True):
    """
    Function to load pretrained VAE and SimCLR encoders, initialize
    supervised and random encoders, and train a classifier on each.

    Args:
      train_sampler: torch sampler
        Sampler over the training examples
      test_sampler: torch sampler
        Sampler over the test examples
      title: String
        Title
      dataset_type: String
        Specifies whether to load the encoders pre-trained on the
        biased or the bias-controlled dataset
      verbose: Boolean
        If True, progress information is printed

    Returns:
      Nothing
    """

    # Report the offending dataset_type value if it is not recognized
    if dataset_type not in ["biased", "bias_ctrl"]:
        raise ValueError("Expected dataset_type to be 'biased' or 'bias_ctrl', "
                         f"but found {dataset_type}.")

    supervised_encoder = models.EncoderCore()
    random_encoder = models.EncoderCore()

    # Load pre-trained VAE
    vae_encoder = load.load_encoder(
        REPO_PATH, model_type="vae", dataset_type=dataset_type,
        verbose=verbose
    )

    # Load pre-trained SimCLR encoder
    simclr_encoder = load.load_encoder(
        REPO_PATH, model_type="simclr", dataset_type=dataset_type,
        verbose=verbose
    )

    # Only the supervised encoder is trained along with its classifier
    encoders = [supervised_encoder, random_encoder, vae_encoder, simclr_encoder]
    freeze_features = [False, True, True, True]
    encoder_labels = ["supervised", "random", "VAE", "SimCLR"]
    num_clf_epochs = [80, 30, 30, 30]

    print(f"\nTraining supervised encoder and classifier for {num_clf_epochs[0]} "
          f"epochs, and all other classifiers for {num_clf_epochs[1]} epochs each.")

    _ = models.train_encoder_clfs_by_fraction_labelled(
        encoders=encoders,
        dataset=dSprites_torchdataset,
        train_sampler=train_sampler,
        test_sampler=test_sampler,
        num_epochs=num_clf_epochs,
        freeze_features=freeze_features,
        subset_seed=SEED,
        encoder_labels=encoder_labels,
        title=title,
        verbose=verbose
    )

Here, we use a biased training data sampler (and an unbiased control sampler) to observe how the different models perform. Because the dataset is much smaller, we increase the number of pre-training and training epochs for the encoders and classifiers.

Let us start with our unbiased control sampler, to get a sense of the classification performance levels we should expect with a dataset this size.

# Call this before any dataset/network initializing or training,

# to ensure reproducibility

set_seed(SEED)

print("Training all models using the control, unbiased training dataset\n")

full_training_procedure(

train_sampler_bias_ctrl, test_sampler_for_bias_ctrl,

title="Classifier performances with control, unbiased training dataset",

dataset_type="bias_ctrl" # For loading correct pre-trained networks

)

A similar pattern is observed here as with the full dataset, though notably most performances are a bit weaker, likely due to us (A) using a smaller training dataset, and (B) training and pre-training for fewer iterations, considering the dataset size, for time-efficiency reasons.

Using the same parameters, we now repeat the analysis with the biased training data sampler.

# Call this before any dataset/network initializing or training,

# to ensure reproducibility

set_seed(SEED)

print("Training all models using the biased training dataset\n")

full_training_procedure(

train_sampler_biased, test_sampler_for_biased,

title="Classifier performances with biased training dataset",

dataset_type="biased" # For loading correct pre-trained networks

)

Interestingly, the SimCLR encoder is not just the only network to perform well: it even outperforms its own control performance (which is measured on the same test dataset), at least with this particular dataset and bias.

Note on performance improvement: This improvement for the SimCLR encoder is reflected in the pre-training loss curves (not shown here), which show that the encoder trained with the biased dataset learns faster than the encoder trained with the unbiased training set. It is possible that the dataset biasing, by reducing the variability in the dataset, makes the contrastive task easier, thus enabling the network to learn a good feature space for the classification task in fewer epochs.

Discussion 7.1.1: How do different models cope with a biased training dataset?#

A. Which models are most and least affected by the biased training dataset?

B. Which types of images in the test set are most likely causing the observed drop in performance?

C. Why are certain models more robust to the bias introduced here than others?

D. What are some methods we can employ to help mitigate the negative effects of biases in our training sets on our ability to learn good data representations with our models?

Submit your feedback#