Tutorial 1: PyTorch#

Week 1, Day 1: Basics and PyTorch

By Neuromatch Academy

Content creators: Shubh Pachchigar, Vladimir Haltakov, Matthew Sargent, Konrad Kording

Content reviewers: Deepak Raya, Siwei Bai, Kelson Shilling-Scrivo

Content editors: Anoop Kulkarni, Spiros Chavlis

Production editors: Arush Tagade, Spiros Chavlis

Tutorial Objectives#

Then have a few specific objectives for this tutorial:

Learn about PyTorch and tensors

Tensor Manipulations

Data Loading

GPUs and CUDA Tensors

Train NaiveNet

Get to know your pod

Start thinking about the course as a whole

Setup#

Throughout your Neuromatch tutorials, most (probably all!) notebooks contain setup cells. These cells will import the required Python packages (e.g., PyTorch, NumPy); set global or environment variables, and load in helper functions for things like plotting. In some tutorials, you will notice that we install some dependencies even if they are preinstalled on Google Colab or Kaggle. This happens because we have added automation to our repository through GitHub Actions.

Be sure to run all of the cells in the setup section. Feel free to expand them and have a look at what you are loading in, but you should be able to fulfill the learning objectives of every tutorial without having to look at these cells.

If you start building your own projects built on this code base we highly recommend looking at them in more detail.

Install dependencies#

Show code cell source

# @title Install dependencies

!pip install pandas --quiet

Install and import feedback gadget#

Show code cell source

# @title Install and import feedback gadget

!pip3 install vibecheck datatops --quiet

from vibecheck import DatatopsContentReviewContainer

def content_review(notebook_section: str):

return DatatopsContentReviewContainer(

"", # No text prompt

notebook_section,

{

"url": "https://pmyvdlilci.execute-api.us-east-1.amazonaws.com/klab",

"name": "neuromatch_dl",

"user_key": "f379rz8y",

},

).render()

feedback_prefix = "W1D1_T1"

# Imports

import time

import random

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

# PyTorch libraries

import torch

from torch import nn

from torchvision import datasets

from torch.utils.data import DataLoader

from torchvision.transforms import ToTensor

Figure Settings#

Show code cell source

# @title Figure Settings

import logging

logging.getLogger('matplotlib.font_manager').disabled = True

import ipywidgets as widgets

%config InlineBackend.figure_format = 'retina'

plt.style.use("https://raw.githubusercontent.com/NeuromatchAcademy/content-creation/main/nma.mplstyle")

Helper Functions#

Show code cell source

# @title Helper Functions

def checkExercise1(A, B, C, D):

"""

Helper function for checking Exercise 1.

Args:

A: torch.Tensor

Torch Tensor of shape (20, 21) consisting of ones.

B: torch.Tensor

Torch Tensor of size([3,4])

C: torch.Tensor

Torch Tensor of size([20,21])

D: torch.Tensor

Torch Tensor of size([19])

Returns:

Nothing.

"""

assert torch.equal(A.to(int),torch.ones(20, 21).to(int)), "Got: {A} \n Expected: {torch.ones(20, 21)} (shape: {torch.ones(20, 21).shape})"

assert np.array_equal(B.numpy(),np.vander([1, 2, 3], 4)), "Got: {B} \n Expected: {np.vander([1, 2, 3], 4)} (shape: {np.vander([1, 2, 3], 4).shape})"

assert C.shape == (20, 21), "Got: {C} \n Expected (shape: {(20, 21)})"

assert torch.equal(D, torch.arange(4, 41, step=2)), "Got {D} \n Expected: {torch.arange(4, 41, step=2)} (shape: {torch.arange(4, 41, step=2).shape})"

print("All correct")

def timeFun(f, dim, iterations, device='cpu'):

"""

Helper function to calculate amount of time taken per instance on CPU/GPU

Args:

f: BufferedReader IO instance

Function name for which to calculate computational time complexity

dim: Integer

Number of dimensions in instance in question

iterations: Integer

Number of iterations for instance in question

device: String

Device on which respective computation is to be run

Returns:

Nothing

"""

iterations = iterations

t_total = 0

for _ in range(iterations):

start = time.time()

f(dim, device)

end = time.time()

t_total += end - start

if device == 'cpu':

print(f"time taken for {iterations} iterations of {f.__name__}({dim}, {device}): {t_total:.5f}")

else:

print(f"time taken for {iterations} iterations of {f.__name__}({dim}, {device}): {t_total:.5f}")

Important note: Colab users

Scratch Code Cells

If you want to quickly try out something or take a look at the data, you can use scratch code cells. They allow you to run Python code, but will not mess up the structure of your notebook.

To open a new scratch cell go to Insert → Scratch code cell.

Section 1: Welcome to Neuromatch Deep learning course#

Time estimate: ~25mins

Video 1: Welcome and History#

This will be an intensive 3 week adventure. We will all learn Deep Learning (DL) in a group. Groups need standards. Read our Code of Conduct.

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_Welcome_and_History_Video")

Video 2: Why DL is cool#

Discuss with your pod: What do you hope to get out of this course? [in about 100 words]

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_Why_DL_is_cool_Video")

Section 2: The Basics of PyTorch#

Time estimate: ~2 hours 05 mins

PyTorch is a Python-based scientific computing package targeted at two sets of audiences:

A replacement for NumPy optimized for the power of GPUs

A deep learning platform that provides significant flexibility and speed

At its core, PyTorch provides a few key features:

A multidimensional Tensor object, similar to NumPy Array but with GPU acceleration.

An optimized autograd engine for automatically computing derivatives.

A clean, modular API for building and deploying deep learning models.

You can find more information about PyTorch in the Appendix.

Section 2.1: Creating Tensors#

Video 3: Making Tensors#

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_Making_Tensors_Video")

There are various ways of creating tensors, and when doing any real deep learning project, we will usually have to do so.

Construct tensors directly:

# We can construct a tensor directly from some common python iterables,

# such as list and tuple nested iterables can also be handled as long as the

# dimensions are compatible

# tensor from a list

a = torch.tensor([0, 1, 2])

#tensor from a tuple of tuples

b = ((1.0, 1.1), (1.2, 1.3))

b = torch.tensor(b)

# tensor from a numpy array

c = np.ones([2, 3])

c = torch.tensor(c)

print(f"Tensor a: {a}")

print(f"Tensor b: {b}")

print(f"Tensor c: {c}")

Tensor a: tensor([0, 1, 2])

Tensor b: tensor([[1.0000, 1.1000],

[1.2000, 1.3000]])

Tensor c: tensor([[1., 1., 1.],

[1., 1., 1.]], dtype=torch.float64)

Some common tensor constructors:

# The numerical arguments we pass to these constructors

# determine the shape of the output tensor

x = torch.ones(5, 3)

y = torch.zeros(2)

z = torch.empty(1, 1, 5)

print(f"Tensor x: {x}")

print(f"Tensor y: {y}")

print(f"Tensor z: {z}")

Tensor x: tensor([[1., 1., 1.],

[1., 1., 1.],

[1., 1., 1.],

[1., 1., 1.],

[1., 1., 1.]])

Tensor y: tensor([0., 0.])

Tensor z: tensor([[[4.8440e-35, 3.0802e-41, 0.0000e+00, 0.0000e+00, 3.7699e+16]]])

Notice that .empty() does not return zeros, but seemingly random numbers. Unlike .zeros(), which initialises the elements of the tensor with zeros, .empty() just allocates the memory. It is hence a bit faster if you are looking to just create a tensor.

Creating random tensors and tensors like other tensors:

# There are also constructors for random numbers

# Uniform distribution

a = torch.rand(1, 3)

# Normal distribution

b = torch.randn(3, 4)

# There are also constructors that allow us to construct

# a tensor according to the above constructors, but with

# dimensions equal to another tensor.

c = torch.zeros_like(a)

d = torch.rand_like(c)

print(f"Tensor a: {a}")

print(f"Tensor b: {b}")

print(f"Tensor c: {c}")

print(f"Tensor d: {d}")

Tensor a: tensor([[0.3615, 0.9025, 0.3785]])

Tensor b: tensor([[ 0.7399, -1.6981, -0.0053, -0.2629],

[-1.4162, -1.1854, 0.1079, 0.3295],

[-0.8142, 1.8536, -1.4424, 1.4957]])

Tensor c: tensor([[0., 0., 0.]])

Tensor d: tensor([[0.1734, 0.5563, 0.8040]])

Reproducibility:

PyTorch Random Number Generator (RNG): You can use

torch.manual_seed()to seed the RNG for all devices (both CPU and GPU):

import torch

torch.manual_seed(0)

For custom operators, you might need to set python seed as well:

import random

random.seed(0)

Random number generators in other libraries (e.g., NumPy):

import numpy as np

np.random.seed(0)

Here, we define for you a function called set_seed that does the job for you!

def set_seed(seed=None, seed_torch=True):

"""

Function that controls randomness. NumPy and random modules must be imported.

Args:

seed : Integer

A non-negative integer that defines the random state. Default is `None`.

seed_torch : Boolean

If `True` sets the random seed for pytorch tensors, so pytorch module

must be imported. Default is `True`.

Returns:

Nothing.

"""

if seed is None:

seed = np.random.choice(2 ** 32)

random.seed(seed)

np.random.seed(seed)

if seed_torch:

torch.manual_seed(seed)

torch.cuda.manual_seed_all(seed)

torch.cuda.manual_seed(seed)

torch.backends.cudnn.benchmark = False

torch.backends.cudnn.deterministic = True

print(f'Random seed {seed} has been set.')

Now, let’s use the set_seed function in the previous example. Execute the cell multiple times to verify that the numbers printed are always the same.

def simplefun(seed=True, my_seed=None):

"""

Helper function to verify effectiveness of set_seed attribute

Args:

seed: Boolean

Specifies if seed value is provided or not

my_seed: Integer

Initializes seed to specified value

Returns:

Nothing

"""

if seed:

set_seed(seed=my_seed)

# uniform distribution

a = torch.rand(1, 3)

# normal distribution

b = torch.randn(3, 4)

print("Tensor a: ", a)

print("Tensor b: ", b)

simplefun(seed=True, my_seed=0) # Turn `seed` to `False` or change `my_seed`

Random seed 0 has been set.

Tensor a: tensor([[0.4963, 0.7682, 0.0885]])

Tensor b: tensor([[ 0.3643, 0.1344, 0.1642, 0.3058],

[ 0.2100, 0.9056, 0.6035, 0.8110],

[-0.0451, 0.8797, 1.0482, -0.0445]])

Numpy-like number ranges:#

The .arange() and .linspace() behave how you would expect them to if you are familar with numpy.

a = torch.arange(0, 10, step=1)

b = np.arange(0, 10, step=1)

c = torch.linspace(0, 5, steps=11)

d = np.linspace(0, 5, num=11)

print(f"Tensor a: {a}\n")

print(f"Numpy array b: {b}\n")

print(f"Tensor c: {c}\n")

print(f"Numpy array d: {d}\n")

Tensor a: tensor([0, 1, 2, 3, 4, 5, 6, 7, 8, 9])

Numpy array b: [0 1 2 3 4 5 6 7 8 9]

Tensor c: tensor([0.0000, 0.5000, 1.0000, 1.5000, 2.0000, 2.5000, 3.0000, 3.5000, 4.0000,

4.5000, 5.0000])

Numpy array d: [0. 0.5 1. 1.5 2. 2.5 3. 3.5 4. 4.5 5. ]

Coding Exercise 2.1: Creating Tensors#

Below you will find some incomplete code. Fill in the missing code to construct the specified tensors.

We want the tensors:

\(A:\) 20 by 21 tensor consisting of ones

\(B:\) a tensor with elements equal to the elements of numpy array \(Z\)

\(C:\) a tensor with the same number of elements as \(A\) but with values \( \sim \mathcal{U}(0,1)^\dagger\)

\(D:\) a 1D tensor containing the even numbers between 4 and 40 inclusive.

\(^\dagger\): \(\mathcal{U(\alpha, \beta)}\) denotes the uniform distribution from \(\alpha\) to \(\beta\), with \(\alpha, \beta \in \mathbb{R}\).

def tensor_creation(Z):

"""

A function that creates various tensors.

Args:

Z: numpy.ndarray

An array of shape (3,4)

Returns:

A : Tensor

20 by 21 tensor consisting of ones

B : Tensor

A tensor with elements equal to the elements of numpy array Z

C : Tensor

A tensor with the same number of elements as A but with values ∼U(0,1)

D : Tensor

A 1D tensor containing the even numbers between 4 and 40 inclusive.

"""

#################################################

## TODO for students: fill in the missing code

## from the first expression

raise NotImplementedError("Student exercise: say what they should have done")

#################################################

A = ...

B = ...

C = ...

D = ...

return A, B, C, D

# numpy array to copy later

Z = np.vander([1, 2, 3], 4)

# Uncomment below to check your function!

# A, B, C, D = tensor_creation(Z)

# checkExercise1(A, B, C, D)

All correct!

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_Creating_Tensors_Exercise")

Section 2.2: Operations in PyTorch#

Tensor-Tensor operations

We can perform operations on tensors using methods under torch.

Video 4: Tensor Operators#

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_Tensors_Operators_Video")

Tensor-Tensor operations

We can perform operations on tensors using methods under torch..

a = torch.ones(5, 3)

b = torch.rand(5, 3)

c = torch.empty(5, 3)

d = torch.empty(5, 3)

# this only works if c and d already exist

torch.add(a, b, out=c)

# Pointwise Multiplication of a and b

torch.multiply(a, b, out=d)

print(c)

print(d)

tensor([[1.0362, 1.1852, 1.3734],

[1.3051, 1.9320, 1.1759],

[1.2698, 1.1507, 1.0317],

[1.2081, 1.9298, 1.7231],

[1.7423, 1.5263, 1.2437]])

tensor([[0.0362, 0.1852, 0.3734],

[0.3051, 0.9320, 0.1759],

[0.2698, 0.1507, 0.0317],

[0.2081, 0.9298, 0.7231],

[0.7423, 0.5263, 0.2437]])

However, in PyTorch, most common Python operators are overridden. The common standard arithmetic operators (\(+\), \(-\), \(*\), \(/\), and \(**\)) have all been lifted to elementwise operations

x = torch.tensor([1, 2, 4, 8])

y = torch.tensor([1, 2, 3, 4])

x + y, x - y, x * y, x / y, x**y # The `**` is the exponentiation operator

(tensor([ 2, 4, 7, 12]),

tensor([0, 0, 1, 4]),

tensor([ 1, 4, 12, 32]),

tensor([1.0000, 1.0000, 1.3333, 2.0000]),

tensor([ 1, 4, 64, 4096]))

Tensor Methods

Tensors also have a number of common arithmetic operations built in. A full list of all methods can be found in the appendix (there are a lot!)

All of these operations should have similar syntax to their numpy equivalents (feel free to skip if you already know this!).

x = torch.rand(3, 3)

print(x)

print("\n")

# sum() - note the axis is the axis you move across when summing

print(f"Sum of every element of x: {x.sum()}")

print(f"Sum of the columns of x: {x.sum(axis=0)}")

print(f"Sum of the rows of x: {x.sum(axis=1)}")

print("\n")

print(f"Mean value of all elements of x {x.mean()}")

print(f"Mean values of the columns of x {x.mean(axis=0)}")

print(f"Mean values of the rows of x {x.mean(axis=1)}")

tensor([[0.5846, 0.0332, 0.1387],

[0.2422, 0.8155, 0.7932],

[0.2783, 0.4820, 0.8198]])

Sum of every element of x: 4.187318325042725

Sum of the columns of x: tensor([1.1051, 1.3306, 1.7517])

Sum of the rows of x: tensor([0.7565, 1.8509, 1.5800])

Mean value of all elements of x 0.46525758504867554

Mean values of the columns of x tensor([0.3684, 0.4435, 0.5839])

Mean values of the rows of x tensor([0.2522, 0.6170, 0.5267])

Matrix Operations

The @ symbol is overridden to represent matrix multiplication. You can also use torch.matmul() to multiply tensors. For dot multiplication, you can use torch.dot(), or manipulate the axes of your tensors and do matrix multiplication (we will cover that in the next section).

Transposes of 2D tensors are obtained using torch.t() or Tensor.T. Note the lack of brackets for Tensor.T - it is an attribute, not a method.

Coding Exercise 2.2 : Simple tensor operations#

Below are two expressions involving operations on matrices.

and

The code block below that computes these expressions using PyTorch is incomplete - fill in the missing lines.

def simple_operations(a1: torch.Tensor, a2: torch.Tensor, a3: torch.Tensor):

"""

Helper function to demonstrate simple operations

i.e., Multiplication of tensor a1 with tensor a2 and then add it with tensor a3

Args:

a1: Torch tensor

Tensor of size ([2,2])

a2: Torch tensor

Tensor of size ([2,2])

a3: Torch tensor

Tensor of size ([2,2])

Returns:

answer: Torch tensor

Tensor of size ([2,2]) resulting from a1 multiplied with a2, added with a3

"""

################################################

## TODO for students: complete the first computation using the argument matricies

raise NotImplementedError("Student exercise: fill in the missing code to complete the operation")

################################################

#

answer = ...

return answer

# Computing expression 1:

# init our tensors

a1 = torch.tensor([[2, 4], [5, 7]])

a2 = torch.tensor([[1, 1], [2, 3]])

a3 = torch.tensor([[10, 10], [12, 1]])

## uncomment to test your function

# A = simple_operations(a1, a2, a3)

# print(A)

tensor([[20, 24],

[31, 27]])

def dot_product(b1: torch.Tensor, b2: torch.Tensor):

###############################################

## TODO for students: complete the first computation using the argument matricies

raise NotImplementedError("Student exercise: fill in the missing code to complete the operation")

###############################################

"""

Helper function to demonstrate dot product operation

Dot product is an algebraic operation that takes two equal-length sequences

(usually coordinate vectors), and returns a single number.

Geometrically, it is the product of the Euclidean magnitudes of the

two vectors and the cosine of the angle between them.

Args:

b1: Torch tensor

Tensor of size ([3])

b2: Torch tensor

Tensor of size ([3])

Returns:

product: Tensor

Tensor of size ([1]) resulting from b1 scalar multiplied with b2

"""

# Use torch.dot() to compute the dot product of two tensors

product = ...

return product

# Computing expression 2:

b1 = torch.tensor([3, 5, 7])

b2 = torch.tensor([2, 4, 8])

## Uncomment to test your function

# b = dot_product(b1, b2)

# print(b)

tensor(82)

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_Simple_Tensor_Operations_Exercise")

Section 2.3 Manipulating Tensors in Pytorch#

Video 5: Tensor Indexing#

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_Manipulating_Tensors_Video")

Indexing

Just as in numpy, elements in a tensor can be accessed by index. As in any numpy array, the first element has index 0 and ranges are specified to include the first to last_element-1. We can access elements according to their relative position to the end of the list by using negative indices. Indexing is also referred to as slicing.

For example, [-1] selects the last element; [1:3] selects the second and the third elements, and [:-2] will select all elements excluding the last and second-to-last elements.

x = torch.arange(0, 10)

print(x)

print(x[-1])

print(x[1:3])

print(x[:-2])

tensor([0, 1, 2, 3, 4, 5, 6, 7, 8, 9])

tensor(9)

tensor([1, 2])

tensor([0, 1, 2, 3, 4, 5, 6, 7])

When we have multidimensional tensors, indexing rules work the same way as NumPy.

# make a 5D tensor

x = torch.rand(1, 2, 3, 4, 5)

print(f" shape of x[0]:{x[0].shape}")

print(f" shape of x[0][0]:{x[0][0].shape}")

print(f" shape of x[0][0][0]:{x[0][0][0].shape}")

shape of x[0]:torch.Size([2, 3, 4, 5])

shape of x[0][0]:torch.Size([3, 4, 5])

shape of x[0][0][0]:torch.Size([4, 5])

Flatten and reshape

There are various methods for reshaping tensors. It is common to have to express 2D data in 1D format. Similarly, it is also common to have to reshape a 1D tensor into a 2D tensor. We can achieve this with the .flatten() and .reshape() methods.

z = torch.arange(12).reshape(6, 2)

print(f"Original z: \n {z}")

# 2D -> 1D

z = z.flatten()

print(f"Flattened z: \n {z}")

# and back to 2D

z = z.reshape(3, 4)

print(f"Reshaped (3x4) z: \n {z}")

Original z:

tensor([[ 0, 1],

[ 2, 3],

[ 4, 5],

[ 6, 7],

[ 8, 9],

[10, 11]])

Flattened z:

tensor([ 0, 1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 11])

Reshaped (3x4) z:

tensor([[ 0, 1, 2, 3],

[ 4, 5, 6, 7],

[ 8, 9, 10, 11]])

You will also see the .view() methods used a lot to reshape tensors. There is a subtle difference between .view() and .reshape(), though for now we will just use .reshape(). The documentation can be found in the Appendix.

Squeezing tensors

When processing batches of data, you will quite often be left with singleton dimensions. E.g., [1,10] or [256, 1, 3]. This dimension can quite easily mess up your matrix operations if you don’t plan on it being there…

In order to compress tensors along their singleton dimensions we can use the .squeeze() method. We can use the .unsqueeze() method to do the opposite.

x = torch.randn(1, 10)

# printing the zeroth element of the tensor will not give us the first number!

print(x.shape)

print(f"x[0]: {x[0]}")

torch.Size([1, 10])

x[0]: tensor([-0.7391, 0.8027, -0.6817, -0.1335, 0.0658, -0.5919, 0.7670, 0.6899,

0.3282, 0.5085])

Because of that pesky singleton dimension, x[0] gave us the first row instead!

# Let's get rid of that singleton dimension and see what happens now

x = x.squeeze(0)

print(x.shape)

print(f"x[0]: {x[0]}")

torch.Size([10])

x[0]: -0.7390837073326111

# Adding singleton dimensions works a similar way, and is often used when tensors

# being added need same number of dimensions

y = torch.randn(5, 5)

print(f"Shape of y: {y.shape}")

# lets insert a singleton dimension

y = y.unsqueeze(1)

print(f"Shape of y: {y.shape}")

Shape of y: torch.Size([5, 5])

Shape of y: torch.Size([5, 1, 5])

Permutation

Sometimes our dimensions will be in the wrong order! For example, we may be dealing with RGB images with dim \([3\times48\times64]\), but our pipeline expects the colour dimension to be the last dimension, i.e., \([48\times64\times3]\). To get around this we can use the .permute() method.

# `x` has dimensions [color,image_height,image_width]

x = torch.rand(3, 48, 64)

# We want to permute our tensor to be [ image_height , image_width , color ]

x = x.permute(1, 2, 0)

# permute(1,2,0) means:

# The 0th dim of my new tensor = the 1st dim of my old tensor

# The 1st dim of my new tensor = the 2nd

# The 2nd dim of my new tensor = the 0th

print(x.shape)

torch.Size([48, 64, 3])

You may also see .transpose() used. This works in a similar way as permute, but can only swap two dimensions at once.

Concatenation

In this example, we concatenate two matrices along rows (axis 0, the first element of the shape) vs. columns (axis 1, the second element of the shape). We can see that the first output tensor’s axis-0 length (6) is the sum of the two input tensors’ axis-0 lengths (3+3); while the second output tensor’s axis-1 length (8) is the sum of the two input tensors’ axis-1 lengths (4+4).

# Create two tensors of the same shape

x = torch.arange(12, dtype=torch.float32).reshape((3, 4))

y = torch.tensor([[2.0, 1, 4, 3], [1, 2, 3, 4], [4, 3, 2, 1]])

# Concatenate along rows

cat_rows = torch.cat((x, y), dim=0)

# Concatenate along columns

cat_cols = torch.cat((x, y), dim=1)

# Printing outputs

print('Concatenated by rows: shape{} \n {}'.format(list(cat_rows.shape), cat_rows))

print('\n Concatenated by colums: shape{} \n {}'.format(list(cat_cols.shape), cat_cols))

Concatenated by rows: shape[6, 4]

tensor([[ 0., 1., 2., 3.],

[ 4., 5., 6., 7.],

[ 8., 9., 10., 11.],

[ 2., 1., 4., 3.],

[ 1., 2., 3., 4.],

[ 4., 3., 2., 1.]])

Concatenated by colums: shape[3, 8]

tensor([[ 0., 1., 2., 3., 2., 1., 4., 3.],

[ 4., 5., 6., 7., 1., 2., 3., 4.],

[ 8., 9., 10., 11., 4., 3., 2., 1.]])

Conversion to Other Python Objects

Converting a tensor to a numpy.ndarray, or vice versa, is easy, and the converted result does not share memory. This minor inconvenience is quite important: when you perform operations on the CPU or GPUs, you do not want to halt computation, waiting to see whether the NumPy package of Python might want to be doing something else with the same chunk of memory.

When converting to a NumPy array, the information being tracked by the tensor will be lost, i.e., the computational graph. This will be covered in detail when you are introduced to autograd tomorrow!

x = torch.randn(5)

print(f"x: {x} | x type: {x.type()}")

y = x.numpy()

print(f"y: {y} | y type: {type(y)}")

z = torch.tensor(y)

print(f"z: {z} | z type: {z.type()}")

x: tensor([ 0.2659, -0.5148, -0.0613, 0.5046, 0.1385]) | x type: torch.FloatTensor

y: [ 0.26593232 -0.5148316 -0.06128114 0.5046449 0.13848118] | y type: <class 'numpy.ndarray'>

z: tensor([ 0.2659, -0.5148, -0.0613, 0.5046, 0.1385]) | z type: torch.FloatTensor

To convert a size-1 tensor to a Python scalar, we can invoke the item function or Python’s built-in functions.

a = torch.tensor([3.5])

a, a.item(), float(a), int(a)

(tensor([3.5000]), 3.5, 3.5, 3)

Coding Exercise 2.3: Manipulating Tensors#

Using a combination of the methods discussed above, complete the functions below.

Function A

This function takes in two 2D tensors \(A\) and \(B\) and returns the column sum of A multiplied by the sum of all the elmements of \(B\), i.e., a scalar, e.g.,

Function B

This function takes in a square matrix \(C\) and returns a 2D tensor consisting of a flattened \(C\) with the index of each element appended to this tensor in the row dimension, e.g.,

Hint: Pay close attention to singleton dimensions.

Function C

This function takes in two 2D tensors \(D\) and \(E\). If the dimensions allow it, this function returns the elementwise sum of \(D\)-shaped \(E\), and \(D\); else this function returns a 1D tensor that is the concatenation of the two tensors, e.g.,

Hint: torch.numel() is an easy way of finding the number of elements in a tensor.

def functionA(my_tensor1, my_tensor2):

"""

This function takes in two 2D tensors `my_tensor1` and `my_tensor2`

and returns the column sum of

`my_tensor1` multiplied by the sum of all the elmements of `my_tensor2`,

i.e., a scalar.

Args:

my_tensor1: torch.Tensor

my_tensor2: torch.Tensor

Retuns:

output: torch.Tensor

The multiplication of the column sum of `my_tensor1` by the sum of

`my_tensor2`.

"""

################################################

## TODO for students: complete functionA

raise NotImplementedError("Student exercise: complete function A")

################################################

# TODO multiplication the sum of the tensors

output = ...

return output

def functionB(my_tensor):

"""

This function takes in a square matrix `my_tensor` and returns a 2D tensor

consisting of a flattened `my_tensor` with the index of each element

appended to this tensor in the row dimension.

Args:

my_tensor: torch.Tensor

Returns:

output: torch.Tensor

Concatenated tensor.

"""

################################################

## TODO for students: complete functionB

raise NotImplementedError("Student exercise: complete function B")

################################################

# TODO flatten the tensor `my_tensor`

my_tensor = ...

# TODO create the idx tensor to be concatenated to `my_tensor`

idx_tensor = ...

# TODO concatenate the two tensors

output = ...

return output

def functionC(my_tensor1, my_tensor2):

"""

This function takes in two 2D tensors `my_tensor1` and `my_tensor2`.

If the dimensions allow it, it returns the

elementwise sum of `my_tensor1`-shaped `my_tensor2`, and `my_tensor2`;

else this function returns a 1D tensor that is the concatenation of the

two tensors.

Args:

my_tensor1: torch.Tensor

my_tensor2: torch.Tensor

Returns:

output: torch.Tensor

Concatenated tensor.

"""

################################################

## TODO for students: complete functionB

raise NotImplementedError("Student exercise: complete function C")

################################################

# TODO check we can reshape `my_tensor2` into the shape of `my_tensor1`

if ...:

# TODO reshape `my_tensor2` into the shape of `my_tensor1`

my_tensor2 = ...

# TODO sum the two tensors

output = ...

else:

# TODO flatten both tensors

my_tensor1 = ...

my_tensor2 = ...

# TODO concatenate the two tensors in the correct dimension

output = ...

return output

## Implement the functions above and then uncomment the following lines to test your code

# print(functionA(torch.tensor([[1, 1], [1, 1]]), torch.tensor([[1, 2, 3], [1, 2, 3]])))

# print(functionB(torch.tensor([[2, 3], [-1, 10]])))

# print(functionC(torch.tensor([[1, -1], [-1, 3]]), torch.tensor([[2, 3, 0, 2]])))

# print(functionC(torch.tensor([[1, -1], [-1, 3]]), torch.tensor([[2, 3, 0]])))

tensor([24, 24])

tensor([[ 0, 2],

[ 1, 3],

[ 2, -1],

[ 3, 10]])

tensor([[ 3, 2],

[-1, 5]])

tensor([ 1, -1, -1, 3, 2, 3, 0])

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_Manipulating_Tensors_Exercise")

Section 2.4: GPUs#

Video 6: GPU vs CPU#

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_GPU_vs_CPU_Video")

By default, when we create a tensor it will not live on the GPU!

x = torch.randn(10)

print(x.device)

cpu

When using Colab notebooks, by default, will not have access to a GPU. In order to start using GPUs we need to request one. We can do this by going to the runtime tab at the top of the page.

By following Runtime → Change runtime type and selecting GPU from the Hardware Accelerator dropdown list, we can start playing with sending tensors to GPUs.

Once you have done this your runtime will restart and you will need to rerun the first setup cell to reimport PyTorch. Then proceed to the next cell.

For more information on the GPU usage policy you can view in the Appendix.

Now we have a GPU.

The cell below should return True.

print(torch.cuda.is_available())

False

CUDA is an API developed by Nvidia for interfacing with GPUs. PyTorch provides us with a layer of abstraction, and allows us to launch CUDA kernels using pure Python.

In short, we get the power of parallelizing our tensor computations on GPUs, whilst only writing (relatively) simple Python!

Here, we define the function set_device, which returns the device use in the notebook, i.e., cpu or cuda. Unless otherwise specified, we use this function on top of every tutorial, and we store the device variable such as

DEVICE = set_device()

Let’s define the function using the PyTorch package torch.cuda, which is lazily initialized, so we can always import it, and use is_available() to determine if our system supports CUDA.

def set_device():

"""

Set the device. CUDA if available, CPU otherwise

Args:

None

Returns:

Nothing

"""

device = "cuda" if torch.cuda.is_available() else "cpu"

if device != "cuda":

print("GPU is not enabled in this notebook. \n"

"If you want to enable it, in the menu under `Runtime` -> \n"

"`Hardware accelerator.` and select `GPU` from the dropdown menu")

else:

print("GPU is enabled in this notebook. \n"

"If you want to disable it, in the menu under `Runtime` -> \n"

"`Hardware accelerator.` and select `None` from the dropdown menu")

return device

Let’s make some CUDA tensors!

# common device agnostic way of writing code that can run on cpu OR gpu

# that we provide for you in each of the tutorials

DEVICE = set_device()

# we can specify a device when we first create our tensor

x = torch.randn(2, 2, device=DEVICE)

print(x.dtype)

print(x.device)

# we can also use the .to() method to change the device a tensor lives on

y = torch.randn(2, 2)

print(f"y before calling to() | device: {y.device} | dtype: {y.type()}")

y = y.to(DEVICE)

print(f"y after calling to() | device: {y.device} | dtype: {y.type()}")

GPU is not enabled in this notebook.

If you want to enable it, in the menu under `Runtime` ->

`Hardware accelerator.` and select `GPU` from the dropdown menu

torch.float32

cpu

y before calling to() | device: cpu | dtype: torch.FloatTensor

y after calling to() | device: cpu | dtype: torch.FloatTensor

Operations between cpu tensors and cuda tensors

Note that the type of the tensor changed after calling .to(). What happens if we try and perform operations on tensors on devices?

x = torch.tensor([0, 1, 2], device=DEVICE)

y = torch.tensor([3, 4, 5], device="cpu")

## Uncomment the following line and run this cell

# z = x + y

We cannot combine CUDA tensors and CPU tensors in this fashion. If we want to compute an operation that combines tensors on different devices, we need to move them first! We can use the .to() method as before, or the .cpu() and .cuda() methods. Note that using the .cuda() will throw an error, if CUDA is not enabled in your machine.

Generally, in this course, all Deep Learning is done on the GPU, and any computation is done on the CPU, so sometimes we have to pass things back and forth, so you’ll see us call.

x = torch.tensor([0, 1, 2], device=DEVICE)

y = torch.tensor([3, 4, 5], device="cpu")

z = torch.tensor([6, 7, 8], device=DEVICE)

# moving to cpu

x = x.to("cpu") # alternatively, you can use x = x.cpu()

print(x + y)

# moving to gpu

y = y.to(DEVICE) # alternatively, you can use y = y.cuda()

print(y + z)

tensor([3, 5, 7])

tensor([ 9, 11, 13])

Coding Exercise 2.4: Just how much faster are GPUs?#

Below is a simple function simpleFun. Complete this function, such that it performs the operations:

Elementwise multiplication

Matrix multiplication

The operations should be able to perfomed on either the CPU or GPU specified by the parameter device. We will use the helper function timeFun(f, dim, iterations, device).

dim = 10000

iterations = 1

def simpleFun(dim, device):

"""

Helper function to check device-compatiblity with computations

Args:

dim: Integer

device: String

"cpu" or "cuda"

Returns:

Nothing.

"""

###############################################

## TODO for students: recreate the function, but

## ensure all computations happens on the `device`

raise NotImplementedError("Student exercise: fill in the missing code to create the tensors")

###############################################

# 2D tensor filled with uniform random numbers in [0,1), dim x dim

x = ...

# 2D tensor filled with uniform random numbers in [0,1), dim x dim

y = ...

# 2D tensor filled with the scalar value 2, dim x dim

z = ...

# elementwise multiplication of x and y

a = ...

# matrix multiplication of x and z

b = ...

del x

del y

del z

del a

del b

## Implement the function above and uncomment the following lines to test your code

# timeFun(f=simpleFun, dim=dim, iterations=iterations)

# timeFun(f=simpleFun, dim=dim, iterations=iterations, device=DEVICE)

Sample output (depends on your hardware)

time taken for 1 iterations of simpleFun(10000, cpu): 23.74070

time taken for 1 iterations of simpleFun(10000, cuda): 0.87535

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_How_Much_Faster_Are_GPUs_Exercise")

Discuss!

Try and reduce the dimensions of the tensors and increase the iterations. You can get to a point where the cpu only function is faster than the GPU function. Why might this be?

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_GPUs_Discussion")

Section 2.5: Datasets and Dataloaders#

Video 7: Getting Data#

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_Getting_Data_Video")

When training neural network models you will be working with large amounts of data. Fortunately, PyTorch offers some great tools that help you organize and manipulate your data samples.

# Import dataset and dataloaders related packages

from torchvision import datasets

from torchvision.transforms import ToTensor

from torch.utils.data import DataLoader

from torchvision.transforms import Compose, Grayscale

Datasets

The torchvision package gives you easy access to many of the publicly available datasets. Let’s load the CIFAR10 dataset, which contains color images of 10 different classes, like vehicles and animals.

Creating an object of type datasets.CIFAR10 will automatically download and load all images from the dataset. The resulting data structure can be treated as a list containing data samples and their corresponding labels.

# Download and load the images from the CIFAR10 dataset

cifar10_data = datasets.CIFAR10(

root="data", # path where the images will be stored

download=True, # all images should be downloaded

transform=ToTensor() # transform the images to tensors

)

# Print the number of samples in the loaded dataset

print(f"Number of samples: {len(cifar10_data)}")

print(f"Class names: {cifar10_data.classes}")

Downloading https://www.cs.toronto.edu/~kriz/cifar-10-python.tar.gz to data/cifar-10-python.tar.gz

Extracting data/cifar-10-python.tar.gz to data

Number of samples: 50000

Class names: ['airplane', 'automobile', 'bird', 'cat', 'deer', 'dog', 'frog', 'horse', 'ship', 'truck']

We have 50,000 samples loaded. Now, let’s take a look at one of them in detail. Each sample consists of an image and its corresponding label.

# Choose a random sample

random.seed(2021)

image, label = cifar10_data[random.randint(0, len(cifar10_data))]

print(f"Label: {cifar10_data.classes[label]}")

print(f"Image size: {image.shape}")

Label: horse

Image size: torch.Size([3, 32, 32])

Color images are modeled as 3 dimensional tensors. The first dimension corresponds to the channels (\(\text{C}\)) of the image (in this case we have RGB images). The second dimensions is the height (\(\text{H}\)) of the image and the third is the width (\(\text{W}\)). We can denote this image format as \(\text{C} \times \text{H} \times \text{W}\).

Coding Exercise 2.5: Display an image from the dataset#

Let’s try to display the image using matplotlib. The code below will not work, because imshow expects to have the image in a different format, i.e., \(\text{C} \times \text{H} \times \text{W}\).

You need to reorder the dimensions of the tensor using the permute method of the tensor. PyTorch torch.permute(*dims) rearranges the original tensor according to the desired ordering and returns a new multidimensional rotated tensor. The size of the returned tensor remains the same as that of the original.

Code hint:

# create a tensor of size 2 x 4

input_var = torch.randn(2, 4)

# print its size and the tensor

print(input_var.size())

print(input_var)

# dimensions permuted

input_var = input_var.permute(1, 0)

# print its size and the permuted tensor

print(input_var.size())

print(input_var)

# TODO: Uncomment the following line to see the error that arises from the current image format

# plt.imshow(image)

# TODO: Comment the above line and fix this code by reordering the tensor dimensions

# plt.imshow(image.permute(...))

# plt.show()

Example output:

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_Display_an_Image_Exercise")

Video 8: Train and Test#

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_Train_and_Test_Video")

Training and Test Datasets

When loading a dataset, you can specify if you want to load the training or the test samples using the train argument. We can load the training and test datasets separately. For simplicity, today we will not use both datasets separately, but this topic will be adressed in the next days.

# Load the training samples

training_data = datasets.CIFAR10(

root="data",

train=True,

download=True,

transform=ToTensor()

)

# Load the test samples

test_data = datasets.CIFAR10(

root="data",

train=False,

download=True,

transform=ToTensor()

)

Files already downloaded and verified

Files already downloaded and verified

Video 9: Data Augmentation - Transformations#

Dataloader

Another important concept is the Dataloader. It is a wrapper around the Dataset that splits it into minibatches (important for training the neural network) and makes the data iterable. The shuffle argument is used to shuffle the order of the samples across the minibatches.

# Create dataloaders with

train_dataloader = DataLoader(training_data, batch_size=64, shuffle=True)

test_dataloader = DataLoader(test_data, batch_size=64, shuffle=True)

Reproducibility: DataLoader will reseed workers following Randomness in multi-process data loading algorithm. Use worker_init_fn() and a generator to preserve reproducibility:

def seed_worker(worker_id):

worker_seed = torch.initial_seed() % 2**32

numpy.random.seed(worker_seed)

random.seed(worker_seed)

g_seed = torch.Generator()

g_seed.manual_seed(my_seed)

DataLoader(

train_dataset,

batch_size=batch_size,

num_workers=num_workers,

worker_init_fn=seed_worker,

generator=g_seed

)

Important: For the seed_worker to have an effect, num_workers should be 2 or more.

We can now query the next batch from the data loader and inspect it. For this we need to convert the dataloader object to a Python iterator using the function iter and then we can query the next batch using the function next.

We can now see that we have a 4D tensor. This is because we have a 64 images in the batch (\(B\)) and each image has 3 dimensions: channels (\(C\)), height (\(H\)) and width (\(W\)). So, the size of the 4D tensor is \(B \times C \times H \times W\).

# Load the next batch

batch_images, batch_labels = next(iter(train_dataloader))

print('Batch size:', batch_images.shape)

# Display the first image from the batch

plt.imshow(batch_images[0].permute(1, 2, 0))

plt.show()

Batch size: torch.Size([64, 3, 32, 32])

Transformations

Another useful feature when loading a dataset is applying transformations on the data - color conversions, normalization, cropping, rotation etc. There are many predefined transformations in the torchvision.transforms package and you can also combine them using the Compose transform. Checkout the pytorch documentation for details.

Coding Exercise 2.6: Load the CIFAR10 dataset as grayscale images#

The goal of this excercise is to load the images from the CIFAR10 dataset as grayscale images. Note that we rerun the set_seed function to ensure reproducibility.

def my_data_load():

"""

Function to load CIFAR10 data as grayscale images

Args:

None

Returns:

data: DataFrame

CIFAR10 loaded Dataframe of shape (3309, 14)

"""

###############################################

## TODO for students: load the CIFAR10 data,

## but as grayscale images and not as RGB colored.

raise NotImplementedError("Student exercise: fill in the missing code to load the data")

###############################################

## TODO Load the CIFAR10 data using a transform that converts the images to grayscale tensors

data = datasets.CIFAR10(...,

transform=...)

# Display a random grayscale image

image, label = data[random.randint(0, len(data))]

plt.imshow(image.squeeze(), cmap="gray")

plt.show()

return data

set_seed(seed=2021)

## After implementing the above code, uncomment the following lines to test your code

# data = my_data_load()

Random seed 2021 has been set.

Example output:

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_Load_CIFAR10_Exercise")

Section 3: Neural Networks#

Time estimate: ~1 hour 30 mins (excluding video)

Now it’s time for you to create your first neural network using PyTorch. This section will walk you through the process of:

Creating a simple neural network model

Training the network

Visualizing the results of the network

Tweaking the network

Video 10: CSV Files#

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_CSV_files_Video")

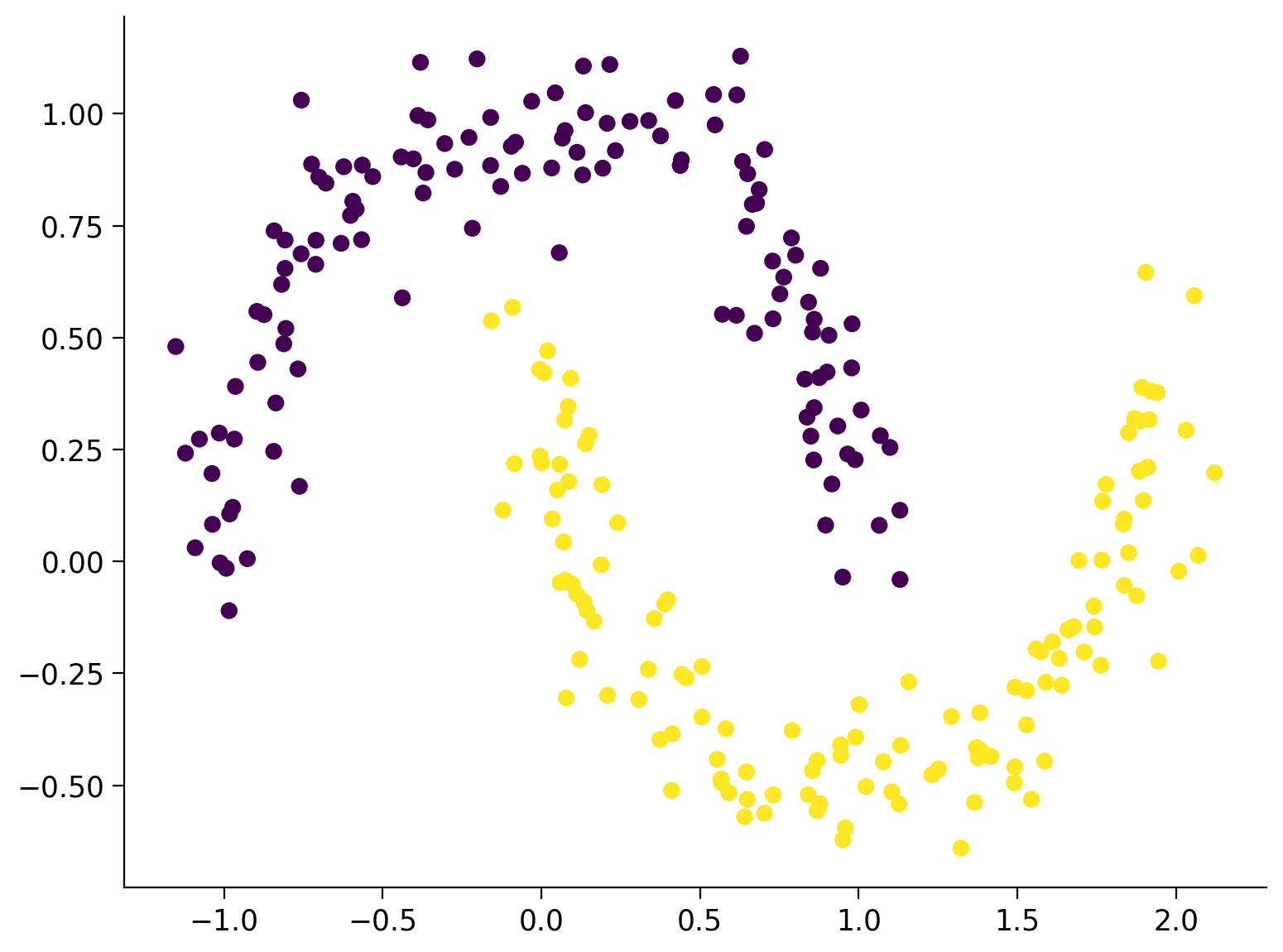

Section 3.1: Data Loading#

First we need some sample data to train our network on. You can use the function below to generate an example dataset consisting of 2D points along two interleaving half circles. The data will be stored in a file called sample_data.csv. You can inspect the file directly in Colab by going to Files on the left side and opening the CSV file.

Generate sample data#

we used scikit-learn module

Show code cell source

# @title Generate sample data

# @markdown we used `scikit-learn` module

from sklearn.datasets import make_moons

# Create a dataset of 256 points with a little noise

X, y = make_moons(256, noise=0.1)

# Store the data as a Pandas data frame and save it to a CSV file

df = pd.DataFrame(dict(x0=X[:,0], x1=X[:,1], y=y))

df.to_csv('sample_data.csv')

Now we can load the data from the CSV file using the Pandas library. Pandas provides many functions for reading files in various formats. When loading data from a CSV file, we can reference the columns directly by their names.

# Load the data from the CSV file in a Pandas DataFrame

data = pd.read_csv("sample_data.csv")

# Create a 2D numpy array from the x0 and x1 columns

X_orig = data[["x0", "x1"]].to_numpy()

# Create a 1D numpy array from the y column

y_orig = data["y"].to_numpy()

# Print the sizes of the generated 2D points X and the corresponding labels Y

print(f"Size X:{X_orig.shape}")

print(f"Size y:{y_orig.shape}")

# Visualize the dataset. The color of the points is determined by the labels `y_orig`.

plt.scatter(X_orig[:, 0], X_orig[:, 1], s=40, c=y_orig)

plt.show()

Size X:(256, 2)

Size y:(256,)

Prepare Data for PyTorch

Now let’s prepare the data in a format suitable for PyTorch - convert everything into tensors.

# Initialize the device variable

DEVICE = set_device()

# Convert the 2D points to a float32 tensor

X = torch.tensor(X_orig, dtype=torch.float32)

# Upload the tensor to the device

X = X.to(DEVICE)

print(f"Size X:{X.shape}")

# Convert the labels to a long interger tensor

y = torch.from_numpy(y_orig).type(torch.LongTensor)

# Upload the tensor to the device

y = y.to(DEVICE)

print(f"Size y:{y.shape}")

GPU is not enabled in this notebook.

If you want to enable it, in the menu under `Runtime` ->

`Hardware accelerator.` and select `GPU` from the dropdown menu

Size X:torch.Size([256, 2])

Size y:torch.Size([256])

Section 3.2: Create a Simple Neural Network#

Video 11: Generating the Neural Network#

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_Generating_Neural_Network_Video")

For this example we want to have a simple neural network consisting of 3 layers:

1 input layer of size 2 (our points have 2 coordinates)

1 hidden layer of size 16 (you can play with different numbers here)

1 output layer of size 2 (we want the have the scores for the two classes)

During the course you will deal with differend kinds of neural networks. On Day 2, we will focus on linear networks, but you will work with some more complicated architectures in the next days. The example here is meant to demonstrate the process of creating and training a neural network end-to-end.

Programing the Network

PyTorch provides a base class for all neural network modules called nn.Module. You need to inherit from nn.Module and implement some important methods:

__init__In the

__init__method you need to define the structure of your network. Here you will specify what layers will the network consist of, what activation functions will be used etc.forwardAll neural network modules need to implement the

forwardmethod. It specifies the computations the network needs to do when data is passed through it.predictThis is not an obligatory method of a neural network module, but it is a good practice if you want to quickly get the most likely label from the network. It calls the

forwardmethod and chooses the label with the highest score.trainThis is also not an obligatory method, but it is a good practice to have. The method will be used to train the network parameters and will be implemented later in the notebook.

Note: You can use the __call__ method of a module directly and it will invoke the forward method: net() does the same as net.forward().

# Inherit from nn.Module - the base class for neural network modules provided by Pytorch

class NaiveNet(nn.Module):

"""

NaiveNet architecture

Structure is as follows:

Linear Layer (2, 16) -> ReLU activation -> Linear Layer (16, 2)

"""

# Define the structure of your network

def __init__(self):

"""

Defines the NaiveNet structure by initialising following attributes

nn.Linear (2, 16): Transformation from the input to the hidden layer

nn.ReLU: Activation function (ReLU) is a non-linearity which is widely used because it reduces computation.

The function returns 0 if it receives any negative input, but for any positive value x, it returns that value back.

nn.Linear (16, 2): Transformation from the hidden to the output layer

Args:

None

Returns:

Nothing

"""

super(NaiveNet, self).__init__()

# The network is defined as a sequence of operations

self.layers = nn.Sequential(

nn.Linear(2, 16),

nn.ReLU(),

nn.Linear(16, 2),

)

# Specify the computations performed on the data

def forward(self, x):

"""

Defines the forward pass through the above defined structure

Args:

x: torch.Tensor

Input tensor of size ([3])

Returns:

layers: nn.module

Initialised Layers in order to re-use the same layer for each forward pass of data you make.

"""

# Pass the data through the layers

return self.layers(x)

# Choose the most likely label predicted by the network

def predict(self, x):

"""

Performs the prediction task of the network

Args:

x: torch.Tensor

Input tensor of size ([3])

Returns:

Most likely class i.e., Label with the highest score

"""

# Pass the data through the networks

output = self.forward(x)

# Choose the label with the highest score

return torch.argmax(output, 1)

# Train the neural network (will be implemented later)

def train(self, X, y):

"""

Training the Neural Network

Args:

X: torch.Tensor

Input data

y: torch.Tensor

Class Labels/Targets

Returns:

Nothing

"""

pass

Check that your network works

Create an instance of your model and visualize it.

# Create new NaiveNet and transfer it to the device

model = NaiveNet().to(DEVICE)

# Print the structure of the network

print(model)

NaiveNet(

(layers): Sequential(

(0): Linear(in_features=2, out_features=16, bias=True)

(1): ReLU()

(2): Linear(in_features=16, out_features=2, bias=True)

)

)

Coding Exercise 3.2: Classify some samples#

Now, let’s pass some of the points of our dataset through the network and see if it works. You should not expect the network to actually classify the points correctly, because it has not been trained yet.

The goal here is just to get some experience with the data structures that are passed to the forward and predict methods and their results.

## Get the samples

# X_samples = ...

# print("Sample input:\n", X_samples)

## Do a forward pass of the network

# output = ...

# print("\nNetwork output:\n", output)

## Predict the label of each point

# y_predicted = ...

# print("\nPredicted labels:\n", y_predicted)

Sample input:

tensor([[ 0.9066, 0.5052],

[-0.2024, 1.1226],

[ 1.0685, 0.2809],

[ 0.6720, 0.5097],

[ 0.8548, 0.5122]], device='cuda:0')

Network output:

tensor([[ 0.1543, -0.8018],

[ 2.2077, -2.9859],

[-0.5745, -0.0195],

[ 0.1924, -0.8367],

[ 0.1818, -0.8301]], device='cuda:0', grad_fn=<AddmmBackward>)

Predicted labels:

tensor([0, 0, 1, 0, 0], device='cuda:0')

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_Classify_some_examples_Exercise")

Section 3.3: Train Your Neural Network#

Video 12: Train the Network#

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_Train_the_Network_Video")

Now it is time to train your network on your dataset. Don’t worry if you don’t fully understand everything yet - we will cover training in much more details in the next days. For now, the goal is just to see your network in action!

You will usually implement the train method directly when implementing your class NaiveNet. Here, we will implement it as a function outside of the class in order to have it in a separate cell.

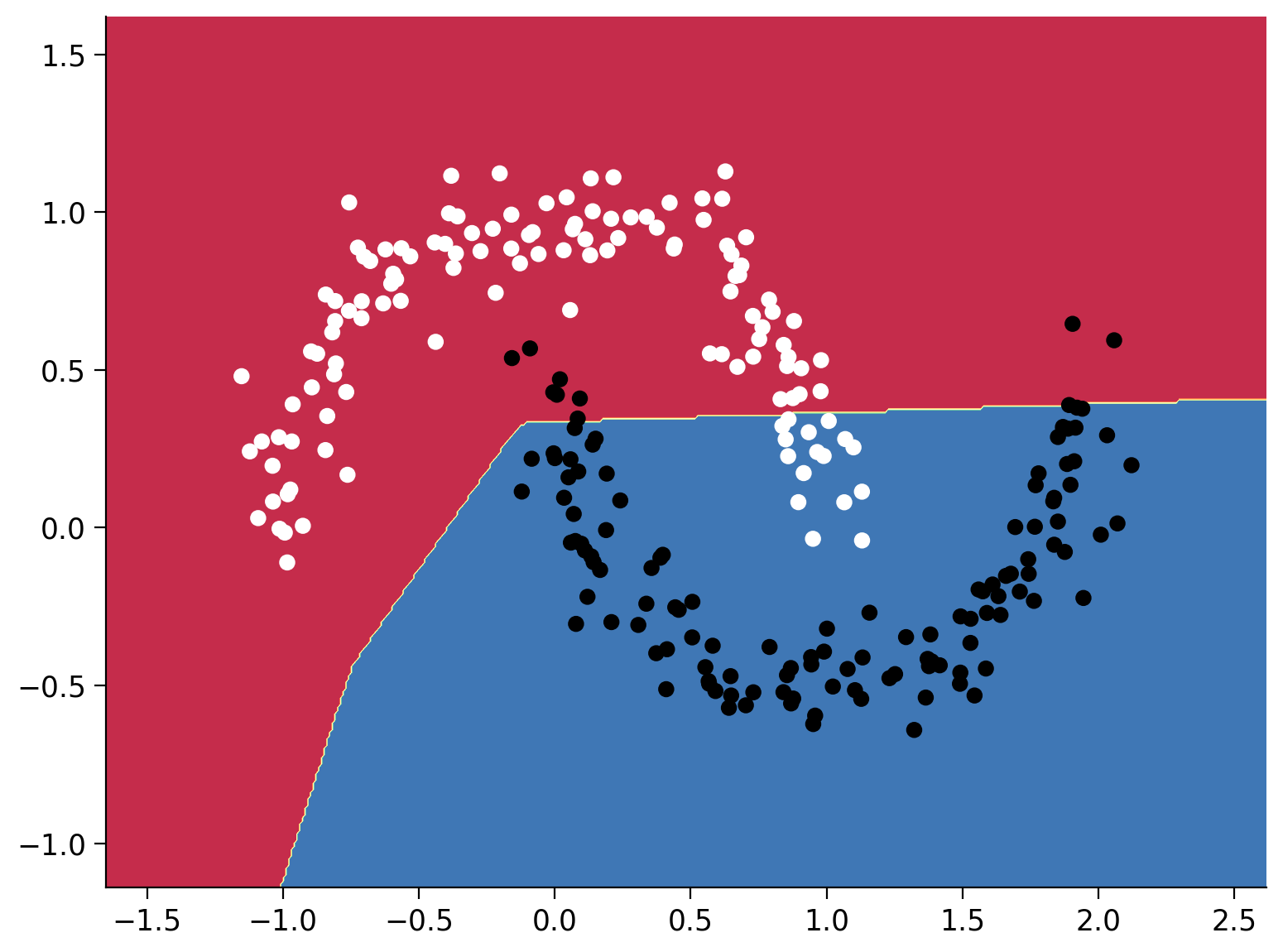

Helper function to plot the decision boundary#

Show code cell source

# @title Helper function to plot the decision boundary

# Code adapted from this notebook: https://jonchar.net/notebooks/Artificial-Neural-Network-with-Keras/

from pathlib import Path

def plot_decision_boundary(model, X, y, device):

"""

Helper function to plot decision boundary

Args:

model: nn.module

NaiveNet instance

X: torch.tensor

Input CIFAR10 data

y: torch.tensor

Class Labels/Targets

device: String

"cpu" or "cuda"

Returns:

Nothing

"""

# Transfer the data to the CPU

X = X.cpu().numpy()

y = y.cpu().numpy()

# Check if the frames folder exists and create it if needed

frames_path = Path("frames")

if not frames_path.exists():

frames_path.mkdir()

# Set min and max values and give it some padding

x_min, x_max = X[:, 0].min() - .5, X[:, 0].max() + .5

y_min, y_max = X[:, 1].min() - .5, X[:, 1].max() + .5

h = 0.01

# Generate a grid of points with distance h between them

xx, yy = np.meshgrid(np.arange(x_min, x_max, h), np.arange(y_min, y_max, h))

# Predict the function value for the whole gid

grid_points = np.c_[xx.ravel(), yy.ravel()]

grid_points = torch.from_numpy(grid_points).type(torch.FloatTensor)

Z = model.predict(grid_points.to(device)).cpu().numpy()

Z = Z.reshape(xx.shape)

# Plot the contour and training examples

plt.contourf(xx, yy, Z, cmap=plt.cm.Spectral)

plt.scatter(X[:, 0], X[:, 1], c=y, cmap=plt.cm.binary)

# Implement the train function given a training dataset X and correcsponding labels y

def train(model, X, y):

"""

Training the Neural Network

Args:

X: torch.Tensor

Input data

y: torch.Tensor

Class Labels/Targets

Returns:

losses: Float

Cross Entropy Loss; Cross-entropy builds upon the idea of entropy

from information theory and calculates the number of bits required

to represent or transmit an average event from one distribution

compared to another distribution.

"""

# The Cross Entropy Loss is suitable for classification problems

loss_function = nn.CrossEntropyLoss()

# Create an optimizer (Stochastic Gradient Descent) that will be used to train the network

learning_rate = 1e-2

optimizer = torch.optim.SGD(model.parameters(), lr=learning_rate)

# Number of epochs

epochs = 15000

# List of losses for visualization

losses = []

for i in range(epochs):

# Pass the data through the network and compute the loss

# We'll use the whole dataset during the training instead of using batches

# in to order to keep the code simple for now.

y_logits = model.forward(X)

loss = loss_function(y_logits, y)

# Clear the previous gradients and compute the new ones

optimizer.zero_grad()

loss.backward()

# Adapt the weights of the network

optimizer.step()

# Store the loss

losses.append(loss.item())

# Print the results at every 1000th epoch

if i % 1000 == 0:

print(f"Epoch {i} loss is {loss.item()}")

plot_decision_boundary(model, X, y, DEVICE)

plt.savefig('frames/{:05d}.png'.format(i))

return losses

# Create a new network instance a train it

model = NaiveNet().to(DEVICE)

losses = train(model, X, y)

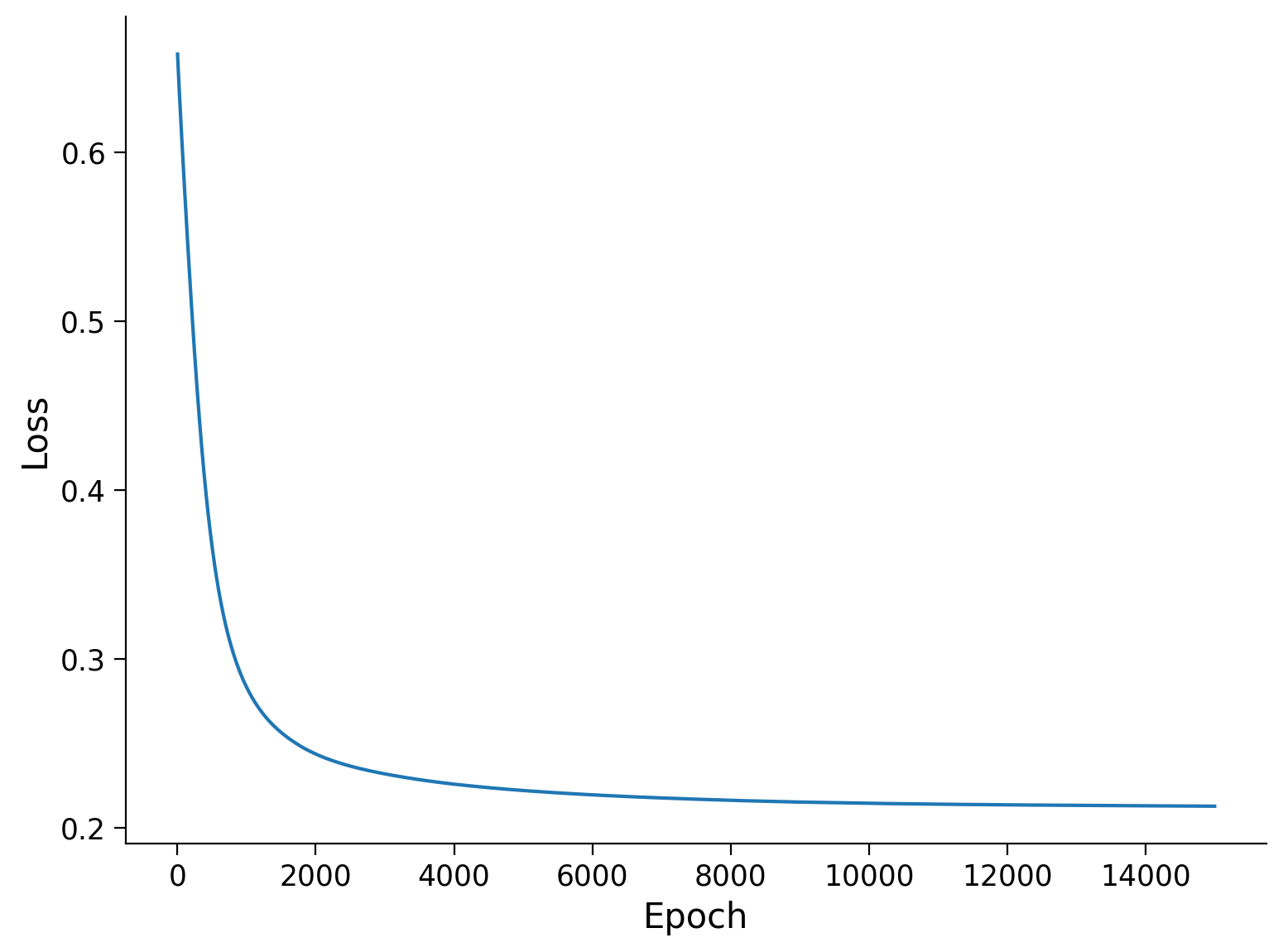

Epoch 0 loss is 0.6582635641098022

Epoch 1000 loss is 0.28303542733192444

Epoch 2000 loss is 0.24354352056980133

Epoch 3000 loss is 0.23178492486476898

Epoch 4000 loss is 0.22571028769016266

Epoch 5000 loss is 0.2219410240650177

Epoch 6000 loss is 0.21937935054302216

Epoch 7000 loss is 0.21753723919391632

Epoch 8000 loss is 0.21614305675029755

Epoch 9000 loss is 0.21508803963661194

Epoch 10000 loss is 0.21437250077724457

Epoch 11000 loss is 0.21384568512439728

Epoch 12000 loss is 0.21345029771327972

Epoch 13000 loss is 0.21314124763011932

Epoch 14000 loss is 0.2128836214542389

Plot the loss during training

Plot the loss during the training to see how it reduces and converges.

plt.plot(np.linspace(1, len(losses), len(losses)), losses)

plt.xlabel("Epoch")

plt.ylabel("Loss")

Text(0, 0.5, 'Loss')

Visualize the training process#

Execute this cell!

Show code cell source

# @title Visualize the training process

# @markdown Execute this cell!

!pip install imageio --quiet

!pip install pathlib --quiet

import imageio.v2 as imageio

from IPython.core.interactiveshell import InteractiveShell

from IPython.display import Image, display

from pathlib import Path

InteractiveShell.ast_node_interactivity = "all"

# Make a list with all images

images = []

for i in range(10):

filename = Path("frames/0"+str(i)+"000.png")

images.append(imageio.imread(filename))

# Save the gif

imageio.mimsave('frames/movie.gif', images)

gifPath = Path("frames/movie.gif")

with open(gifPath,'rb') as f:

display(Image(data=f.read(), format='png'))

Video 13: Play with it#

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_Play_with_it_Video")

Exercise 3.3: Tweak your Network#

You can now play around with the network a little bit to get a feeling of what different parameters are doing. Here are some ideas what you could try:

Increase or decrease the number of epochs for training

Increase or decrease the size of the hidden layer

Add one additional hidden layer

Can you get the network to better fit the data?

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_Tweak_your_Network_Discussion")

Video 14: XOR Widget#

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_XOR_widget_Video")

Exclusive OR (XOR) logical operation gives a true (1) output when the number of true inputs is odd. That is, a true output result if one, and only one, of the inputs to the gate is true. If both inputs are false (0) or both are true or false output results. Mathematically speaking, XOR represents the inequality function, i.e., the output is true if the inputs are not alike; otherwise, the output is false.

In case of two inputs (\(X\) and \(Y\)) the following truth table is applied:

Here, with 0, we denote False, and with 1 we denote True in boolean terms.

Interactive Demo 3.3: Solving XOR#

Here we use an open source and famous visualization widget developed by Tensorflow team available here.

Play with the widget and observe if you can solve the continuous XOR dataset.

Now add one hidden layer with three units, play with the widget, and set weights by hand to solve this dataset perfectly.

For the second part, you should set the weights by clicking on the connections and either type the value or use the up and down keys to change it by one increment. You could also do the same for the biases by clicking on the tiny square to each neuron’s bottom left. Even though there are infinitely many solutions, a neat solution when \(f(x)\) is ReLU is:

Try to set the weights and biases to implement this function after you played enough :)

Play with the parameters to solve XOR

Do you think we can solve the discrete XOR (only 4 possibilities) with only 2 hidden units?

Show code cell source

# @markdown Do you think we can solve the discrete XOR (only 4 possibilities) with only 2 hidden units?

w1_min_xor = 'Select' # @param ['Select', 'Yes', 'No']

if w1_min_xor == 'Yes':

print("Awesome. Indeed, yes. We take the two points for which the output should be 1 and dedicate one of the hidden units to each of them. Each of theses ReLU functions are diagonal and tuned so that only for one of those two points the output is 1. In the end, we add these two together. And voila - discrete xor.")

elif w1_min_xor == 'No':

print("How about giving it another try?")

else:

print("Select 'Yes' or 'No'!")

Select 'Yes' or 'No'!

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_XOR_Interactive_Demo")

Section 4: Ethics And Course Info#

Video 15: Ethics#

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_Ethics_Video")

Video 16: Be a group#

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_Be_a_group_Video")

Video 17: Syllabus#

Submit your feedback#

Show code cell source

# @title Submit your feedback

content_review(f"{feedback_prefix}_Syllabus_Video")

Meet our lecturers#

Week 1: the building blocks#

Week 2: making things work#

Week 3: more magic#

Now, go to the visualization of ICLR papers. Read a few abstracts. Look at the various clusters. Where do you see yourself in this map?

Bonus - 60 years of Machine Learning Research in one Plot#

By Hendrik Strobelt (MIT-IBM Watson AI Lab) with support from Benjamin Hoover.

In this notebook we visualize a subset* of 3,300 articles retreived from the AllenAI S2ORC dataset. We represent each paper by a position that is output of a dimensionality reduction method applied to a vector representation of each paper. The vector representation is the output of a neural network.

Note: The selection is very biased on the keywords and methodology we used to filter. Please see the details section to learn about what we did.

Install and Import altair and vega_datasets.#

Show code cell source

# @title Install and Import `altair` and `vega_datasets`.

!pip install altair vega_datasets --quiet

import altair as alt # altair is defining data visualizations

# Source data files

# Position data file maps ID to x,y positions

# original link: http://gltr.io/temp/ml_regexv1_cs_ma_citation+_99perc.pos_umap_cosine_100_d0.1.json

POS_FILE = 'https://osf.io/qyrfn/download'

# original link: http://gltr.io/temp/ml_regexv1_cs_ma_citation+_99perc_clean.csv

# Metadata file maps ID to title, abstract, author,....

META_FILE = 'https://osf.io/vfdu6/download'

# data loading and wrangling

def load_data():

"""

Loading the data

Args:

None

Returns:

Merged read dataFrame combining id and paper_id;

"""

positions = pd.read_json(POS_FILE)

positions[['x', 'y']] = positions['pos'].to_list()

meta = pd.read_csv(META_FILE)

return positions.merge(meta, left_on='id', right_on='paper_id')

# load data

data = load_data()

---------------------------------------------------------------------------

HTTPError Traceback (most recent call last)

Cell In[102], line 32

28 return positions.merge(meta, left_on='id', right_on='paper_id')

31 # load data

---> 32 data = load_data()

Cell In[102], line 25, in load_data()

15 def load_data():

16 """

17 Loading the data

18

(...)

23 Merged read dataFrame combining id and paper_id;

24 """

---> 25 positions = pd.read_json(POS_FILE)

26 positions[['x', 'y']] = positions['pos'].to_list()

27 meta = pd.read_csv(META_FILE)

File /opt/hostedtoolcache/Python/3.9.25/x64/lib/python3.9/site-packages/pandas/io/json/_json.py:791, in read_json(path_or_buf, orient, typ, dtype, convert_axes, convert_dates, keep_default_dates, precise_float, date_unit, encoding, encoding_errors, lines, chunksize, compression, nrows, storage_options, dtype_backend, engine)

788 if convert_axes is None and orient != "table":

789 convert_axes = True

--> 791 json_reader = JsonReader(

792 path_or_buf,

793 orient=orient,

794 typ=typ,

795 dtype=dtype,

796 convert_axes=convert_axes,

797 convert_dates=convert_dates,

798 keep_default_dates=keep_default_dates,

799 precise_float=precise_float,

800 date_unit=date_unit,

801 encoding=encoding,

802 lines=lines,

803 chunksize=chunksize,

804 compression=compression,

805 nrows=nrows,

806 storage_options=storage_options,

807 encoding_errors=encoding_errors,

808 dtype_backend=dtype_backend,

809 engine=engine,

810 )

812 if chunksize:

813 return json_reader

File /opt/hostedtoolcache/Python/3.9.25/x64/lib/python3.9/site-packages/pandas/io/json/_json.py:904, in JsonReader.__init__(self, filepath_or_buffer, orient, typ, dtype, convert_axes, convert_dates, keep_default_dates, precise_float, date_unit, encoding, lines, chunksize, compression, nrows, storage_options, encoding_errors, dtype_backend, engine)

902 self.data = filepath_or_buffer

903 elif self.engine == "ujson":

--> 904 data = self._get_data_from_filepath(filepath_or_buffer)

905 self.data = self._preprocess_data(data)

File /opt/hostedtoolcache/Python/3.9.25/x64/lib/python3.9/site-packages/pandas/io/json/_json.py:944, in JsonReader._get_data_from_filepath(self, filepath_or_buffer)

937 filepath_or_buffer = stringify_path(filepath_or_buffer)

938 if (

939 not isinstance(filepath_or_buffer, str)

940 or is_url(filepath_or_buffer)

941 or is_fsspec_url(filepath_or_buffer)

942 or file_exists(filepath_or_buffer)

943 ):

--> 944 self.handles = get_handle(

945 filepath_or_buffer,

946 "r",

947 encoding=self.encoding,

948 compression=self.compression,

949 storage_options=self.storage_options,

950 errors=self.encoding_errors,

951 )

952 filepath_or_buffer = self.handles.handle

953 elif (

954 isinstance(filepath_or_buffer, str)

955 and filepath_or_buffer.lower().endswith(

(...)

958 and not file_exists(filepath_or_buffer)

959 ):

File /opt/hostedtoolcache/Python/3.9.25/x64/lib/python3.9/site-packages/pandas/io/common.py:728, in get_handle(path_or_buf, mode, encoding, compression, memory_map, is_text, errors, storage_options)

725 codecs.lookup_error(errors)

727 # open URLs

--> 728 ioargs = _get_filepath_or_buffer(

729 path_or_buf,

730 encoding=encoding,

731 compression=compression,

732 mode=mode,

733 storage_options=storage_options,

734 )

736 handle = ioargs.filepath_or_buffer

737 handles: list[BaseBuffer]

File /opt/hostedtoolcache/Python/3.9.25/x64/lib/python3.9/site-packages/pandas/io/common.py:384, in _get_filepath_or_buffer(filepath_or_buffer, encoding, compression, mode, storage_options)

382 # assuming storage_options is to be interpreted as headers

383 req_info = urllib.request.Request(filepath_or_buffer, headers=storage_options)

--> 384 with urlopen(req_info) as req:

385 content_encoding = req.headers.get("Content-Encoding", None)

386 if content_encoding == "gzip":

387 # Override compression based on Content-Encoding header

File /opt/hostedtoolcache/Python/3.9.25/x64/lib/python3.9/site-packages/pandas/io/common.py:289, in urlopen(*args, **kwargs)

283 """

284 Lazy-import wrapper for stdlib urlopen, as that imports a big chunk of

285 the stdlib.

286 """

287 import urllib.request

--> 289 return urllib.request.urlopen(*args, **kwargs)

File /opt/hostedtoolcache/Python/3.9.25/x64/lib/python3.9/urllib/request.py:214, in urlopen(url, data, timeout, cafile, capath, cadefault, context)

212 else:

213 opener = _opener

--> 214 return opener.open(url, data, timeout)

File /opt/hostedtoolcache/Python/3.9.25/x64/lib/python3.9/urllib/request.py:523, in OpenerDirector.open(self, fullurl, data, timeout)

521 for processor in self.process_response.get(protocol, []):

522 meth = getattr(processor, meth_name)

--> 523 response = meth(req, response)

525 return response

File /opt/hostedtoolcache/Python/3.9.25/x64/lib/python3.9/urllib/request.py:632, in HTTPErrorProcessor.http_response(self, request, response)

629 # According to RFC 2616, "2xx" code indicates that the client's

630 # request was successfully received, understood, and accepted.

631 if not (200 <= code < 300):

--> 632 response = self.parent.error(

633 'http', request, response, code, msg, hdrs)

635 return response

File /opt/hostedtoolcache/Python/3.9.25/x64/lib/python3.9/urllib/request.py:555, in OpenerDirector.error(self, proto, *args)

553 http_err = 0

554 args = (dict, proto, meth_name) + args

--> 555 result = self._call_chain(*args)

556 if result:

557 return result

File /opt/hostedtoolcache/Python/3.9.25/x64/lib/python3.9/urllib/request.py:494, in OpenerDirector._call_chain(self, chain, kind, meth_name, *args)

492 for handler in handlers:

493 func = getattr(handler, meth_name)

--> 494 result = func(*args)

495 if result is not None:

496 return result

File /opt/hostedtoolcache/Python/3.9.25/x64/lib/python3.9/urllib/request.py:747, in HTTPRedirectHandler.http_error_302(self, req, fp, code, msg, headers)

744 fp.read()

745 fp.close()

--> 747 return self.parent.open(new, timeout=req.timeout)

File /opt/hostedtoolcache/Python/3.9.25/x64/lib/python3.9/urllib/request.py:523, in OpenerDirector.open(self, fullurl, data, timeout)

521 for processor in self.process_response.get(protocol, []):

522 meth = getattr(processor, meth_name)

--> 523 response = meth(req, response)

525 return response

File /opt/hostedtoolcache/Python/3.9.25/x64/lib/python3.9/urllib/request.py:632, in HTTPErrorProcessor.http_response(self, request, response)

629 # According to RFC 2616, "2xx" code indicates that the client's

630 # request was successfully received, understood, and accepted.

631 if not (200 <= code < 300):

--> 632 response = self.parent.error(

633 'http', request, response, code, msg, hdrs)

635 return response

File /opt/hostedtoolcache/Python/3.9.25/x64/lib/python3.9/urllib/request.py:561, in OpenerDirector.error(self, proto, *args)

559 if http_err:

560 args = (dict, 'default', 'http_error_default') + orig_args

--> 561 return self._call_chain(*args)

File /opt/hostedtoolcache/Python/3.9.25/x64/lib/python3.9/urllib/request.py:494, in OpenerDirector._call_chain(self, chain, kind, meth_name, *args)

492 for handler in handlers:

493 func = getattr(handler, meth_name)

--> 494 result = func(*args)

495 if result is not None:

496 return result

File /opt/hostedtoolcache/Python/3.9.25/x64/lib/python3.9/urllib/request.py:641, in HTTPDefaultErrorHandler.http_error_default(self, req, fp, code, msg, hdrs)

640 def http_error_default(self, req, fp, code, msg, hdrs):

--> 641 raise HTTPError(req.full_url, code, msg, hdrs, fp)

HTTPError: HTTP Error 308: Permanent Redirect

Define Visualization using ALtair#

Show code cell source

# @title Define Visualization using ALtair

YEAR_PERIOD = "quinquennial" # @param

selection = alt.selection_multi(fields=[YEAR_PERIOD], bind='legend')

data[YEAR_PERIOD] = (data["year"] / 5.0).apply(np.floor) * 5

chart = alt.Chart(data[["x", "y", "authors", "title", YEAR_PERIOD, "citation_count"]], width=800,

height=800).mark_circle(radius=2, opacity=0.2).encode(

alt.Color(YEAR_PERIOD+':O',

scale=alt.Scale(scheme='viridis', reverse=False, clamp=True, domain=list(range(1955,2020,5))),

# legend=alt.Legend(title='Total Records')

),

alt.Size('citation_count',

scale=alt.Scale(type="pow", exponent=1, range=[15, 300])

),

alt.X('x:Q',

scale=alt.Scale(zero=False), axis=alt.Axis(labels=False)

),

alt.Y('y:Q',

scale=alt.Scale(zero=False), axis=alt.Axis(labels=False)

),

tooltip=['title', 'authors'],

# size='citation_count',

# color="decade:O",

opacity=alt.condition(selection, alt.value(.8), alt.value(0.2)),

).add_selection(

selection

).interactive()

---------------------------------------------------------------------------

KeyError Traceback (most recent call last)

File /opt/hostedtoolcache/Python/3.9.25/x64/lib/python3.9/site-packages/pandas/core/indexes/base.py:3812, in Index.get_loc(self, key)

3811 try:

-> 3812 return self._engine.get_loc(casted_key)

3813 except KeyError as err:

File pandas/_libs/index.pyx:167, in pandas._libs.index.IndexEngine.get_loc()

File pandas/_libs/index.pyx:196, in pandas._libs.index.IndexEngine.get_loc()